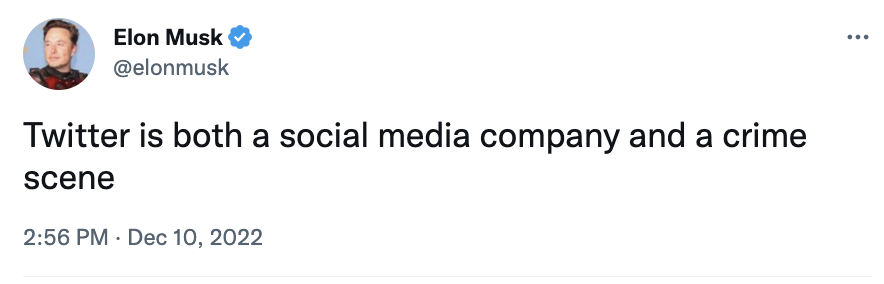

On Saturday, Elon Musk tweeted that the social media site he owns is a crime scene.

I’m pretty sure his confession to owning and running a crime scene was not intended as an invitation for the Securities and Exchange Commission to mine the site for evidence that Elmo engaged in one or several securities-related violations in conjunction with his purchase of it. (As I’ll get to, Elmo’s claim that his own property is a crime scene may, counterintuitively, be an attempt to stave off that kind of investigative scrutiny.)

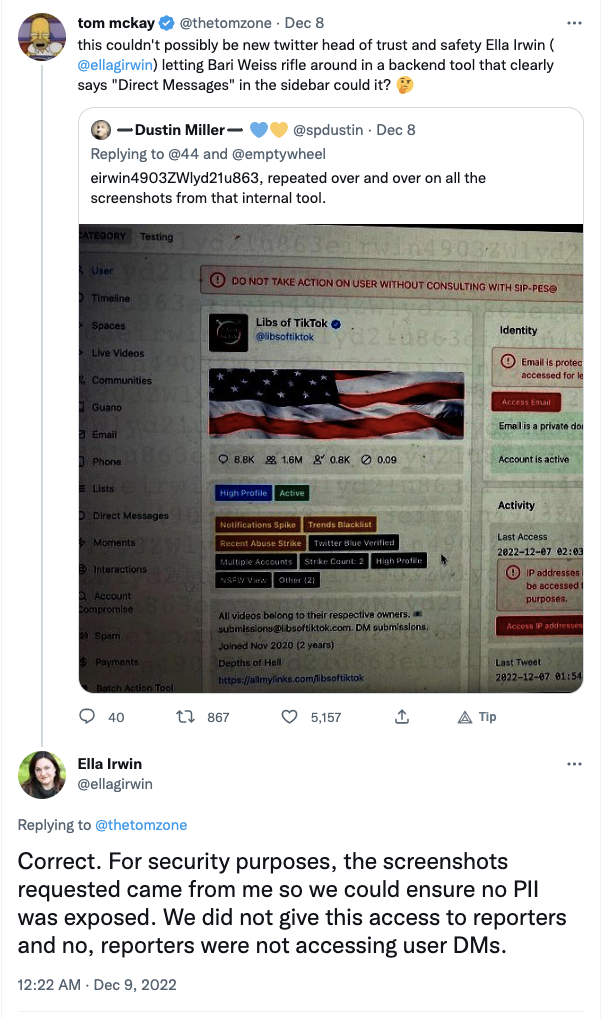

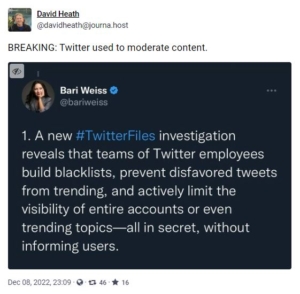

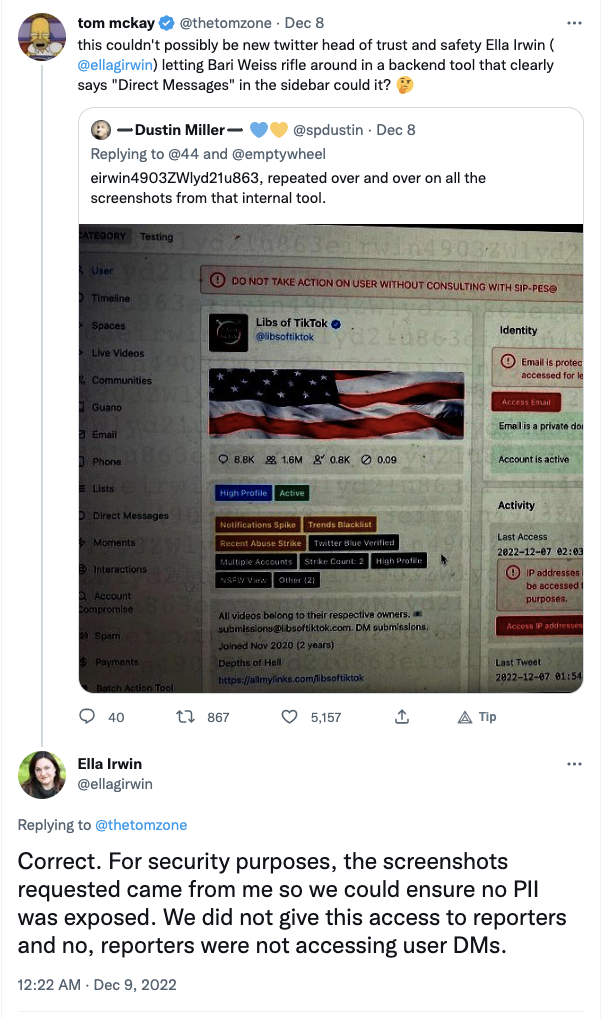

Similarly, he probably wasn’t boasting that the Federal Trade Commission and a bunch of European regulators are investigating how Elmo’s recklessness has violated his users’ privacy. He cares so little about that, his newly installed head of Twitter Safety, Ella Irwin, confirmed she was spending her time in charge of a woefully gutted department sharing private user data with one of the mouthpieces Elmo has gotten to rifle through Twitter documents. Worry not, though: Irwin deemed sharing the moderation history of three far right activists — and the control panel used for moderation — not to be a security or privacy risk.

Likewise, I’m virtually certain Elmo didn’t mean to boast that San Francisco has started cataloguing the beds he had installed at Twitter headquarters so he can flog his (often H1B-captive) engineers to work round the clock.

Given what has come out of the “Twitter Files” project so far, not to mention the number of coup-conspirators Elmo has welcomed back on the platform, I assume he doesn’t mean to emphasize that Twitter is one of the key sources of evidence about the failed January 6 coup attempt, even against — especially against — the coup instigator. On the contrary, Elmo has invited a bunch of pundits to write long breathless threads about the ban of Trump’s account that entirely leave out what happened on January 6. Here too, then, Elmo may be trying to undercut a known criminal investigation by labeling his social media site a crime scene.

No.

When Elmo says Twitter is a crime scene, he’s not imagining federal investigators swarming his joint to collect evidence that would be introduced in a legal proceeding according to the Rules of Criminal or Civil Procedure.

Indeed, a central part of the breathless Twitter Files project involves insinuating, at every turn, malice on the part of either law enforcement (often the FBI) or other federal organizations mislabeled as law enforcement (like the Cybersecurity and Infrastructure Security Agency, CISA, which is part of DHS), even while presenting evidence that disproves the allegations being floated. That’s what Matt Taibbi has done. I will henceforth refer to him as #MattyDickPics for his wails that the DNC succeeded in getting nonconsensually posted dick pics removed, some of which were part of an inauthentic campaign that Steve Bannon chum Guo Wengui pushed out. (Side note: my Tweet linking to Mother Jones’ story on the Guo Wengui tie, which shows that these tweets were doubly violations of Twitter’s Terms of Service, got flagged by Twitter as “sensitive content.”)

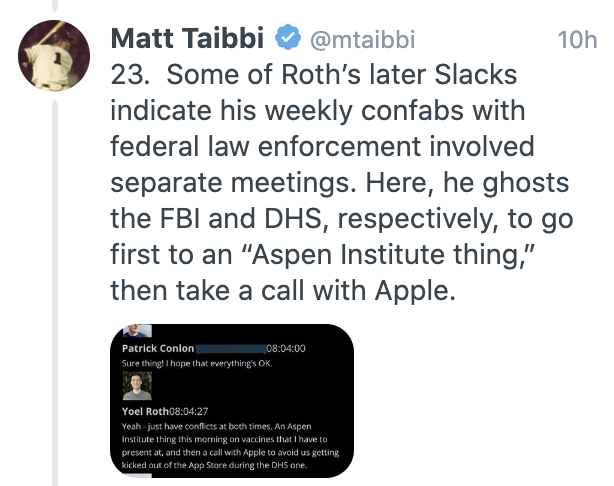

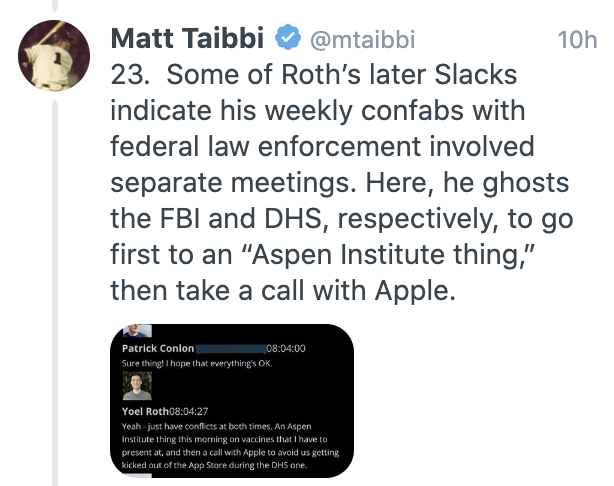

In one attempt to prove that former head of Twitter Safety Yoel Roth was too close to law enforcement, for example, MattyDickPics showed that Roth didn’t have weekly meetings pre-scheduled, and therefore could get blown off in favor of the Aspen Institute or Apple.

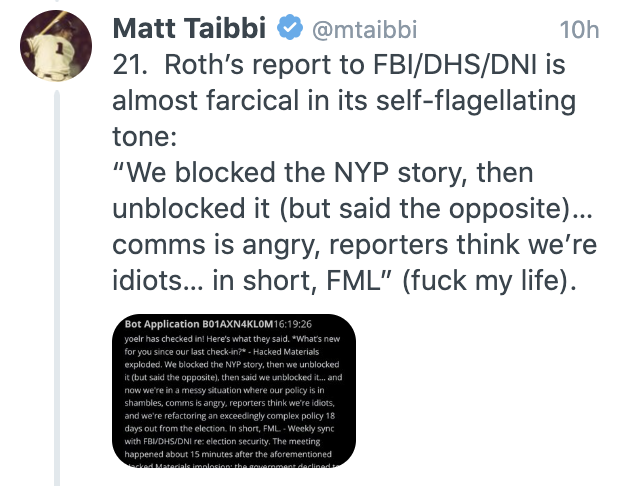

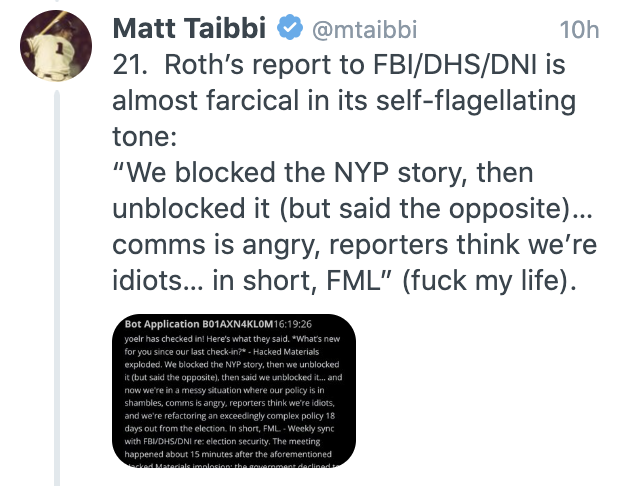

In another, Matty showed Roth writing to what appears to be an internal Slack about Twitter’s Hunter Biden response, but claimed it was a “report to FBI/DHS/DNI.” Taibbi has discovered something genuinely newsworthy: Per Roth, when he asked about the “Hunter Biden” “laptop,” the government declined to say anything useful.

Weekly sync with FBI/DHS/DNI re: election security. The meeting happened about 15 minutes after the aforementioned Hacked Materials implosion; the government declined to share anything useful when asked. [my emphasis]

This entire campaign largely arose out of suspicion that the FBI was ordering Twitter to take action to harm Trump (or undermine the Hunter Biden laptop story). Matty here reveals that not only did that not happen, but when Twitter affirmatively asked for information, “the government declined to share anything useful.”

This is one of those instances where the conclusion should have been, “BREAKING: We were wrong. FBI did not order Twitter to kill the Hunter Biden laptop story.” Instead, Matty labels this a “report to” the government, not a “report about” a meeting with the government. And he says absolutely nothing about the evidence debunking the theory he and the frothy right came in with.

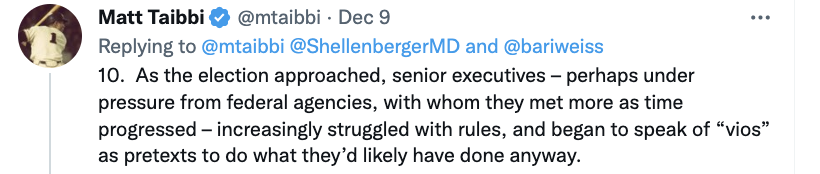

Instead, Matty makes a big deal out of the fact that, “Roth not only met weekly with the FBI and DHS, but with the Office of the Director of National Intelligence (DNI).” Reminder: At the time, DHS was led (unlawfully) by Chad Wolf. ODNI was led by John Ratcliffe. And one of Ratcliffe’s top aides was Trump’s most consistent firewall, Kash Patel. Roth may have been meeting with spooks, but he was meeting with Trump’s hand-picked spooks.

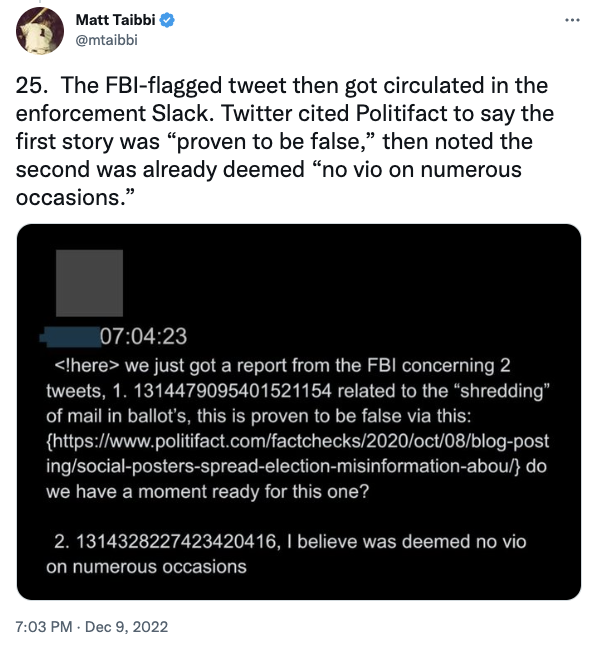

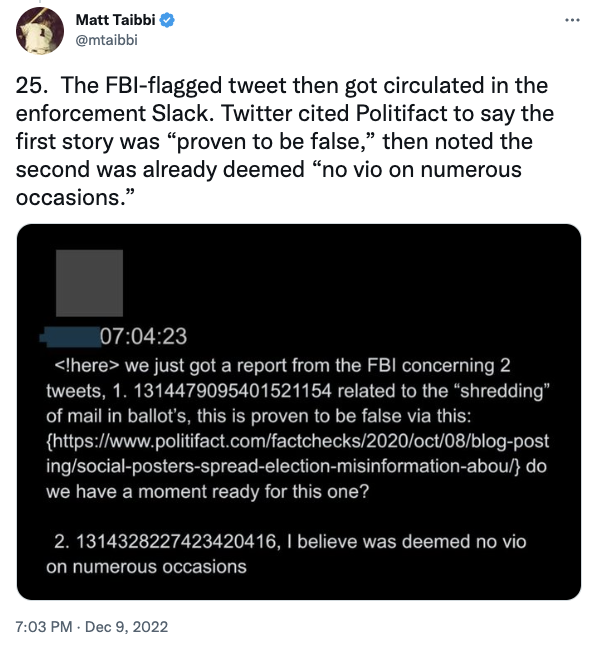

In another fizzled pistol, Matty shows Twitter responding to two reported Tweets from the FBI (without describing the basis on which FBI reported them) and in each case, debunking any claim that the Tweets were disinformation.

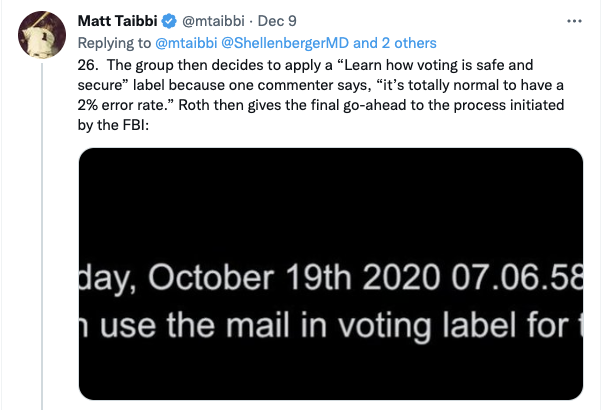

Matty complains that Twitter applied a label reassuring people that voting is secure. This is either just gross cynicism about efforts to support democracy, or a complaint that Twitter refused to institutionally embrace conspiracy theories. Whichever it is, it amounts to a complaint that Twitter tried to protect the election.

Perhaps my favorite example is where Matty, who is supposed to be showing us what happened between the Hunter Biden laptop moment and when, after Trump attempts a coup, Twitter bans him, instead shows us Slacks that post-date January 6. He provides no date or any other context. He shares these, he says, because they are an example of a Twitter exec “getting a kick out of intensified relationships with federal agencies.” They show Roth joking about how he should document his meetings.

Matty provides no basis for his judgment that this shows Twitter execs “getting a kick out of intensified relationships with federal agencies.” It’s even possible that Roth was claiming this was an FBI meeting the same way people name their wifi “FBI surveillance van,” as a joke. This is the kind of projection of motive that, elsewhere, Matty complains about Twitter doing (I mean, I guess he counts as Twitter now!), but with literally no basis to make this particular interpretation.

Honestly, I wish Matty had committed an act of journalism here — had at least provided the date of these texts! — because these texts are genuinely interesting.

It’s highly unlikely, though, that Roth is worried about documenting that he had meetings with the FBI, and Matty has already shown us why that’d obviously be the case. As Matty has shown, Roth had weekly meetings with the FBI on election integrity and monthly meetings on criminal investigations. He listed those meetings with the FBI as meetings with the FBI.

Yoel Roth was not afraid to document that he had meetings with the FBI, and Matty, more than anyone, has seen proof of that, because this breathless thread is based on Roth documenting those meetings with the FBI.

One distinct possibility that Matty apparently didn’t even consider is that, in the wake of the coup attempt, Roth had meetings with law enforcement, including the FBI, that were qualitatively different from those that went before because … well, because Twitter had become a crime scene! Consider the possibility, for example, that the FBI would need to know how Trump’s tweets were disseminated, including among already arrested violent attackers. It was evident from very early in the investigation, for example, that Trump’s December 19 Tweet led directly to both militia members and totally random people on the Internet planning to arm themselves and travel to DC. Or consider the report in the podcast, Finding Q, that only after January 6 did the FBI investigate certain aspects of QAnon that probably could have been investigated earlier: Twitter data on that particular conspiracy would likely be of interest in such an investigation. Consider the known details about how convicted seditionists used Trump’s tweets in the wake of the failed coup attempt in discussions of planning a far more violent follow-up attack.

Matty, for one, simply doesn’t consider whether Elmo’s observation explains all of this: that Twitter had become a crime scene, that the FBI would treat it differently as Twitter became a key piece of evidence in investigations of over 1,200 people.

None of this shows the “collusion” with the Deep State that Matty is looking for. Thus far, it shows the opposite.

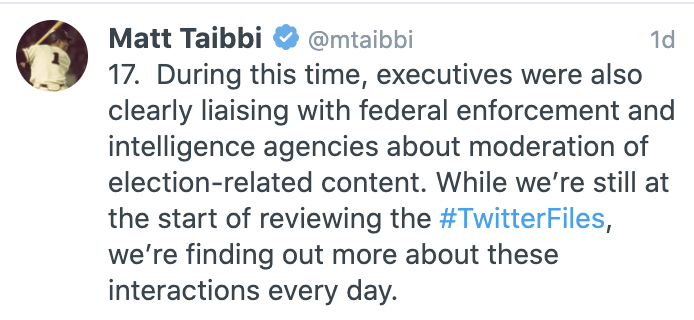

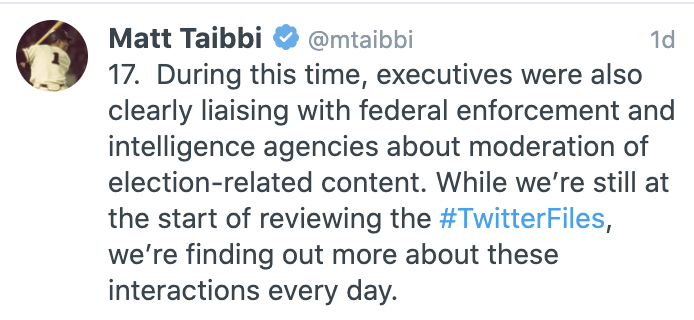

Which may be why, close to the beginning of this particular screed, Matty explained (as he did about several other topics), that he was making grand pronouncements about Twitter’s relationship with law enforcement (and non-LE government entities like CISA) even though, “we’re still at the start of reviewing” the records.

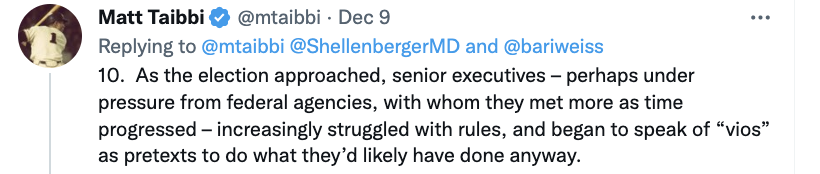

Seven Tweets before he made that admission (“we’re still at the start of reviewing” these files), Matty insinuates, in spite of what his own thread would show to be evidence to the contrary, that Twitter struggled as Trump increasingly attacked democracy “perhaps under pressure from federal agencies.”

He and his fellow Elmo mouthpieces have reached their conclusion (that Twitter did what it did “perhaps under pressure from” the Feds) even though they’ve only started evaluating the evidence, and what evidence they’ve shown shows the opposite.

This is, nakedly, an attempt to attack the Deep State, to invent claims before actually evaluating the evidence, even when finding evidence to the contrary.

I mean, Matty is perfectly entitled to fabricate attacks against the Deep State if he wants, and Elmo has chosen to give Matty preferential access to non-public data from which to fabricate those attacks. But it certainly puts Elmo’s claim that his site is a crime scene in a different light.

Elmo has chosen a handful of people, including Matty and several others with records of making shit up, to confirm their priors using Twitter’s internal files. He’s doing so even as he threatens to crack down on anyone with actual knowledge of what went down speaking publicly. That is, Elmo is trying to create allegations of criminality based off breathlessly shared files — a replay of the GRU/WikiLeaks/Trump play in 2016 — by ensuring the opposite of transparency, ensuring only people like Matty, who has already provided proof that he’s willing to make shit up to confirm his priors, can speak about this evidence.

That’s Elmo’s crime scene.

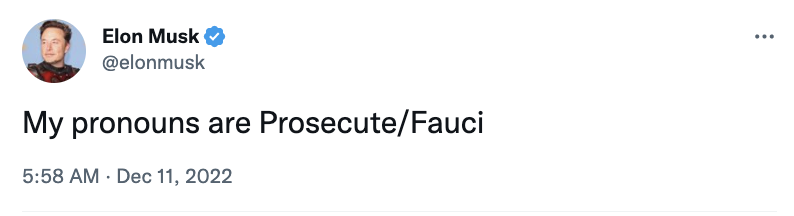

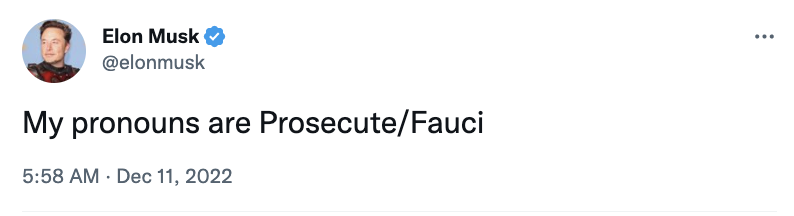

Elmo has targeted Anthony Fauci.

He fired former FBI General Counsel, Jim Baker, because Jim Baker was acting as a lawyer — and because Jonathan Turley launched an attack on Baker.

He has fabricated an anti-semitic attack on Roth, suggesting the guy who made the decision to throttle the NYPost story on “Hunter Biden’s” “laptop” is a pedophile.

These are scapegoats. Elmo is inviting House Republicans to drag them through the mud; incoming Oversight Chair James Comer has already responded with a demand for testimony from Jim Baker and Yoel Roth. Elmo has not invited law enforcement into his self-described crime scene. The mouthpieces Elmo has invited in to tamper with any evidence have, instead, speculated (in spite of evidence to the contrary) that pressure from law enforcement led people like Jim Baker and Yoel Roth to make the decisions they did.

That’s Elmo’s crime scene.

A week before Elmo announced that he hosted a crime scene, he posted this, “Anything anyone says will be used against you in a court of law,” then within a minute edited it, “Anything anyone says will be used against me in a court of law.”

Elmo’s response to buying a crime scene, used to incite an attack on American democracy, is to flip the script, to turn those who failed to do enough to prevent that attack on democracy into the villains of the story. It’s a continuation of the tactic Trump used: to turn an investigation into Trump’s efforts to maximize a Russian attack on democracy into, instead, an investigation of that investigation, one that created FBI villains, just as Matty invented pressure from law enforcement while displaying evidence of none.

And Elmo’s doing so even while using the fascism machine he bought, which Trump used to launch his coup attempt, to incite more violence against select targets.