Debunking The Deficit Myth

Posts in this series

The Deficit Myth By Stephanie Kelton: Introduction And Index

The first chapter of Stephanie Kelton’s book The Deficit Myth takes up the biggest myth about federal government finances, the idea that federal budget deficits are a problem in themselves. The deficit myth is rooted in the idea that the federal government budget should work just like a household budget. A family can’t spend more than its income will support. The family has income, and may be able to borrow money, and the sum of these sets the limit on household spending. Those who propagate the deficit myth say government expenditures should be constrained by the government’s ability to tax and borrow. First the government has to find the money, through taxes or borrowing, and only once it has found the money can it spend. The way things actually work is different.

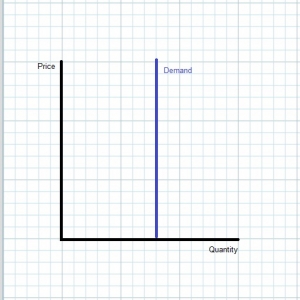

In the real world, it goes like this. Congress votes to direct an expenditure and authorize payment. An agency carries out that direction. The Treasury instructs the Fed to pay a vendor. The Fed makes the payment by crediting the bank account of the vendor. That’s all that happens. It turns out that the real myth is that the Treasury had to find the money before the Fed would credit the vendor. That’s because the federal government holds the monopoly on creating money. U.S. Constitution Art. 1, §§8, 10. In practice this power is given to the Treasury, which mints coins, and to the Fed, which creates dollars. [1]

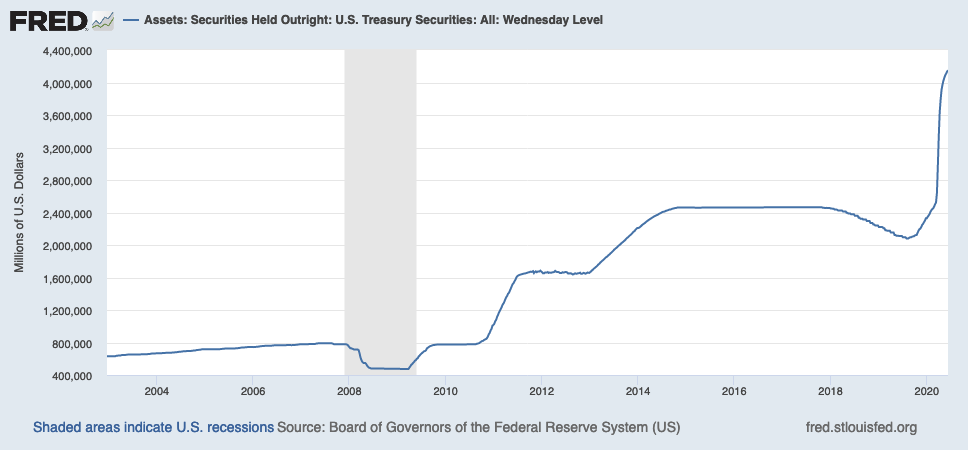

It also turns out that, for the most part, the Treasury does cover its expenditures by taxing or borrowing, but because the government is the issuer of dollars, it doesn’t have to. [2] In the last few months, the Treasury has been selling securities and the Fed has been buying about 70% of them. Here’s a chart from FRED showing Fed holdings of Treasury securities. The Fed may or may not sell those securities to third parties. If it doesn’t, it will hold them to maturity, and the interest it earns on them will be remitted to the Treasury along with the rest of the Fed’s net earnings.

The recognition that spending comes first and finding the money comes second is one of the fundamental ideas of MMT. Kelton describes her meeting with Warren Mosler, who introduced her to these ideas; the stories are amusing and instructive. I particularly like this part:

[Mosler] began by referring to the US dollar as “a simple public monopoly.” Since the US government is the sole source of dollars, it was silly to think of Uncle Sam as needing to get dollars from the rest of us. Obviously, the issuer of the dollar can have all the dollars it could possibly want. “The government doesn’t want dollars,” Mosler explained. “It wants something else.”

“What does it want?” I asked.

“It wants to provision itself,” he replied. “The tax isn’t there to raise money. It’s there to get people working and producing things for the government.” Pp. 24-5.

Put a slightly different way, people accept the government’s money in exchange for goods and services because the government’s money is the only way to pay taxes imposed by the government. Kelton says she found this hard to accept. She spent a long time researching and thinking about it, and eventually wrote her first published peer-reviewed paper on the nature of money. [3]

The monopoly makes the government the issuer of money and everyone else a user. That fundamental difference means the government faces different financial constraints than households do, and it certainly isn’t constrained by its ability to tax and borrow. Kelton offers several interesting analogies that help people grasp the Copernican Revolution this insight entails.

Once we understand that the government doesn’t require tax receipts or borrowing to finance its operations, the immediate question becomes: why bother taxing and borrowing at all? Kelton offers four reasons for taxation.

1. Taxation ensures that people will accept the government’s money in exchange for goods and services purchased by the government.

2. Taxes can be used to protect against inflation by reducing the amount of money people have to spend.

3. Taxes are a great tool for reducing wealth inequality.

4. Taxes can be used to encourage or deter behaviors society wants to control. [4]

She explains borrowing this way: the government offers people a different kind of money, one that bears interest. People can exchange their non-interest-bearing dollars for interest-bearing dollars if they wish to. “… US Treasuries are just interest-bearing dollars.” P. 36. Let’s call the non-interest-bearing dollars “green dollars”, and the interest-bearing ones “yellow dollars”.

When the government spends more than it taxes away from us, we say that the government has run a fiscal deficit. That deficit increases the supply of green dollars. For more than a hundred years, the government has chosen to sell US Treasuries in an amount equal to its deficit spending. So, if the government spends $5 trillion but only taxes $4 trillion away, it will sell $1 trillion worth of US Treasuries. What we call government borrowing is nothing more than Uncle Sam allowing people to transform green dollars into interest-bearing yellow dollars. Pp. 36-7.
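For readers who like a toy model, here is a minimal Python sketch of the green/yellow dollar accounting in Kelton’s $5 trillion example, with figures in trillions. It is a hypothetical illustration, not Kelton’s: the names `PrivateSector` and `fiscal_year` are made up, everyone outside the federal government is lumped into one sector, and it assumes Treasury sales exactly match the deficit, as in the convention she describes.

```python
from dataclasses import dataclass


@dataclass
class PrivateSector:
    """Everyone outside the federal government, lumped together (a simplification)."""
    green: float = 0.0   # non-interest-bearing dollars
    yellow: float = 0.0  # interest-bearing dollars (US Treasuries)

    @property
    def net_financial_assets(self) -> float:
        return self.green + self.yellow


def fiscal_year(sector: PrivateSector, spending: float, taxes: float) -> float:
    """Apply one year of federal spending and taxing; return the deficit."""
    sector.green += spending  # federal spending credits private bank accounts
    sector.green -= taxes     # taxes debit them
    deficit = spending - taxes
    if deficit > 0:
        # Assumed convention: Treasury sells securities equal to the deficit,
        # so holders swap that many green dollars for yellow ones.
        sector.green -= deficit
        sector.yellow += deficit
    return deficit


# Kelton's example, in trillions of dollars.
private = PrivateSector()
deficit = fiscal_year(private, spending=5.0, taxes=4.0)
print(deficit, private.green, private.yellow, private.net_financial_assets)
# 1.0 0.0 1.0 1.0
```

The sketch makes one point: the bond sale changes the form of the new dollars, not the amount. The $1 trillion deficit shows up as $1 trillion of added private financial assets whether they are held as green dollars or yellow ones.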

It might seem that there are no constraints, but that is not so. Congress has created some legislative constraints on its behavior, including PAYGO, the Byrd Rule, and the debt ceiling, but these can be waived, and always are if a majority of Congress really wants to do something. They also serve as a useful way of lying to progressives demanding public spending on not-rich people, like Medicare for All. We have to pay for it under our PAYGO rules, they say, while waiving PAYGO for military spending (my language is harsher than Kelton’s).

The real constraints are the availability of productive resources and inflation. The right questions are not “Where can we find the money?” but “Will this expenditure cause unacceptable levels of inflation?”, “Do we have the real resources we need to do this?” and “Is this something we really want to do?” As Kelton puts it, if we have the votes, we have the money.

In my next post, I will examine some of these points in more detail. Please feel free to ask questions or request elaboration in the comments.

=====

[Graphic via Grand Rapids Community Media Center under Creative Commons license-Attribution, No Derivatives]

[1] Art. 1, §8 authorizes the federal government to create money; §10 prohibits the states from issuing money. That leaves open, for now, the possibility that private entities can issue money. Banks, and from time to time other private entities, play a role in the creation of money, but I do not see a discussion of this in the book.

For those interested, here’s a discussion of the MMT view from Bill Mitchell. I may take this up in a later post. In the meantime, note that every creation of money by a bank loan is matched by an offsetting liability: the borrower’s new deposit is balanced by the borrower’s debt to the bank. Thus, bank creation of money does not increase total financial wealth. In MMT this is called horizontal money. It is contrasted with vertical money, representing the excess of government expenditures over total tax receipts, which does increase financial wealth. Here’s a discussion of this point.
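The horizontal/vertical distinction can be sketched as a toy double-entry ledger in Python. This is a hypothetical illustration, not from the book or from Mitchell: the function names are made up, the ledger covers only the non-government sector, and a “deficit payment” here simply means a government payment not offset by taxes.

```python
from collections import defaultdict


def new_ledger():
    # Hypothetical ledger: entity name -> total financial assets and liabilities.
    return defaultdict(lambda: {"assets": 0.0, "liabilities": 0.0})


def net_private_wealth(ledger):
    """Sum of (assets - liabilities) across all non-government entities."""
    return sum(s["assets"] - s["liabilities"] for s in ledger.values())


def bank_loan(ledger, bank, borrower, amount):
    # Horizontal money: the bank books a loan (asset) and a new deposit (liability);
    # the borrower books the deposit (asset) and the debt (liability).
    ledger[bank]["assets"] += amount
    ledger[bank]["liabilities"] += amount
    ledger[borrower]["assets"] += amount
    ledger[borrower]["liabilities"] += amount


def deficit_payment(ledger, bank, vendor, amount):
    # Vertical money: the Fed credits the vendor's bank with reserves (asset) and
    # the bank credits the vendor's deposit (its liability, the vendor's asset).
    # The offsetting liability is the government's, which this ledger leaves out.
    ledger[bank]["assets"] += amount
    ledger[bank]["liabilities"] += amount
    ledger[vendor]["assets"] += amount


ledger = new_ledger()
bank_loan(ledger, "bank", "household", 100.0)
after_loan = net_private_wealth(ledger)
deficit_payment(ledger, "bank", "vendor", 50.0)
after_payment = net_private_wealth(ledger)
print(after_loan, after_payment)  # 0.0 50.0
```

The loan creates $100 of new deposits but leaves net private wealth at zero, while the unfunded government payment adds $50 to it, which is the difference between horizontal and vertical money.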

[2] There are, of course, constraints on government spending, especially inflation and resource availability. We’ll get to that in a later post.

[3] Kelton cites the paper in a footnote: The Role Of The State And The Hierarchy Of Money.

[4] Compare this list to the list prepared by Beardsley Ruml, chairman of the Federal Reserve Bank of New York, in 1946.

![[Photo by Piron Guillaume via Unsplash]](https://www.emptywheel.net/wp-content/uploads/2017/07/Healthcare_PironGuillaume-Unsplash_v1.jpg)