Bret Baier’s False Claim, the Escort Service, and Former Fox News Pundit Keith Ablow

Deep into one version of what is referred to as the “Hunter Biden” “laptop” (according to reports done for Washington Examiner by Gus Dimitrelos*), there’s a picture of a check, dated November 14, 2018, for $3,400, paid to a woman with a Slavic name. The check bears a signature that matches others, attributed to Hunter Biden, from the “laptop” also attributed to him. A line crosses out Hunter’s ex-spouse’s name on the check, and the memo line reads “Blue Water Wellness” along with a word that is illegible, possibly “Rehab.”

The check appears in a chat thread, dated November 26, 2018, apparently initiated to set up a tryst with an escort in New York City. Just over 12 hours after setting up that tryst, the Russian or Ukrainian woman who manages the escort service, Eva, wrote back, asking Hunter if he was in New York, because she had a problem with his check, that $3,400 check dated twelve days earlier. Hunter was effusively apologetic, and offered to pay the presumed sex worker via wire, because it was the only way he could be 100% certain it would get to her. Shortly thereafter, he sent two transfers from his Wells Fargo account, $3,200 plus $30 fees, directly to the woman’s bank account, and $800 via Zelle drawn on Wells Fargo.

Those transfers from Hunter Biden’s Wells Fargo account to a presumed sex worker with a Slavic name took place between the day, October 31, 2018, when IRS Agent Joseph Ziegler, newly arrived on IRS’ international tax squad, launched an investigation into an international online sex business and the day, December 10, 2018, when Ziegler would piggyback off that sex business investigation to launch an investigation into Hunter Biden. The Hunter Biden investigation was initially based off a Suspicious Activity Report from Wells Fargo sent on September 21, 2018 and from there, quickly focused on Hunter’s ties to Burisma, precisely the investigation the then President was demanding.

Understand: The entire five-year-long investigation of Hunter Biden was based off payments involving Wells Fargo quite similar to this one, the check for $3,400 to a sex worker associated (in this case, at least) with what Dimitrelos describes as an escort service.

Research on the company yielded bank reports indicating that [Hunter Biden] made payments to a U.S. contractor, who also had received payments from that U.K. company.

Only, this particular payment — the need to wire the presumed sex worker money to cover the check — ties the escort service to one of the businesses of former Fox News pundit Keith Ablow: Blue Water Wellness, a float spa just a few blocks down the road from where Ablow’s psychiatric practice was before it got shut down amid allegations of sex abuse of patients and a DEA investigation. Emails obtained from a different version of the “laptop” show that on November 13, Blue Water Wellness sent Hunter an appointment reminder, albeit for an appointment on November 17, not November 14. That appointment reminder is the first of around nine appointment reminders at the spa during the period.

The tryst with the presumed sex worker with the Slavic name does appear to have happened overnight between November 13 and 14. Between 1:58 and 6:33AM, there were two attempts to sign into Hunter’s Venmo account from a new device, five verification codes sent to his email, and two password resets, along with the addition of the presumed sex worker to his Zelle account at Wells Fargo, which he would use to send her money over a week later. All that makes it appear that they were together, but that Hunter didn’t have his phone, the phone he could use to pay her, and so tried to do so from a different device. Maybe he gave up and simply wrote her a check, from the same account on which that Zelle account drew.

None of which explains why he appears to have written “Blue Water Wellness” on a check to pay a presumed sex worker. Maybe he was trying to cover up what he was paying for. Maybe he understood there to be a tie. Or maybe it was the advertising Blue Water did at the time.

Deep in a different part of the laptop analyzed by Dimitrelos, though, a deleted invoice shows that Hunter met with former Fox News pundit Keith Ablow on the same day as Hunter apparently wrote that check to the presumed sex worker. The deleted invoice reflects two 60-minute sessions billed by Baystate Psychiatry, the office just blocks away from the float spa.

Emails obtained from a different version of the “Hunter Biden” “laptop” show that at some point on November 26, 2018, as Hunter first arranged a tryst in New York City and then, no longer in New York, sent a wire directly from Wells Fargo to the presumed sex worker, someone accessed Hunter’s Venmo account from a new device — successfully this time — one located in Newburyport, MA, where former Fox News pundit Keith Ablow’s businesses were.

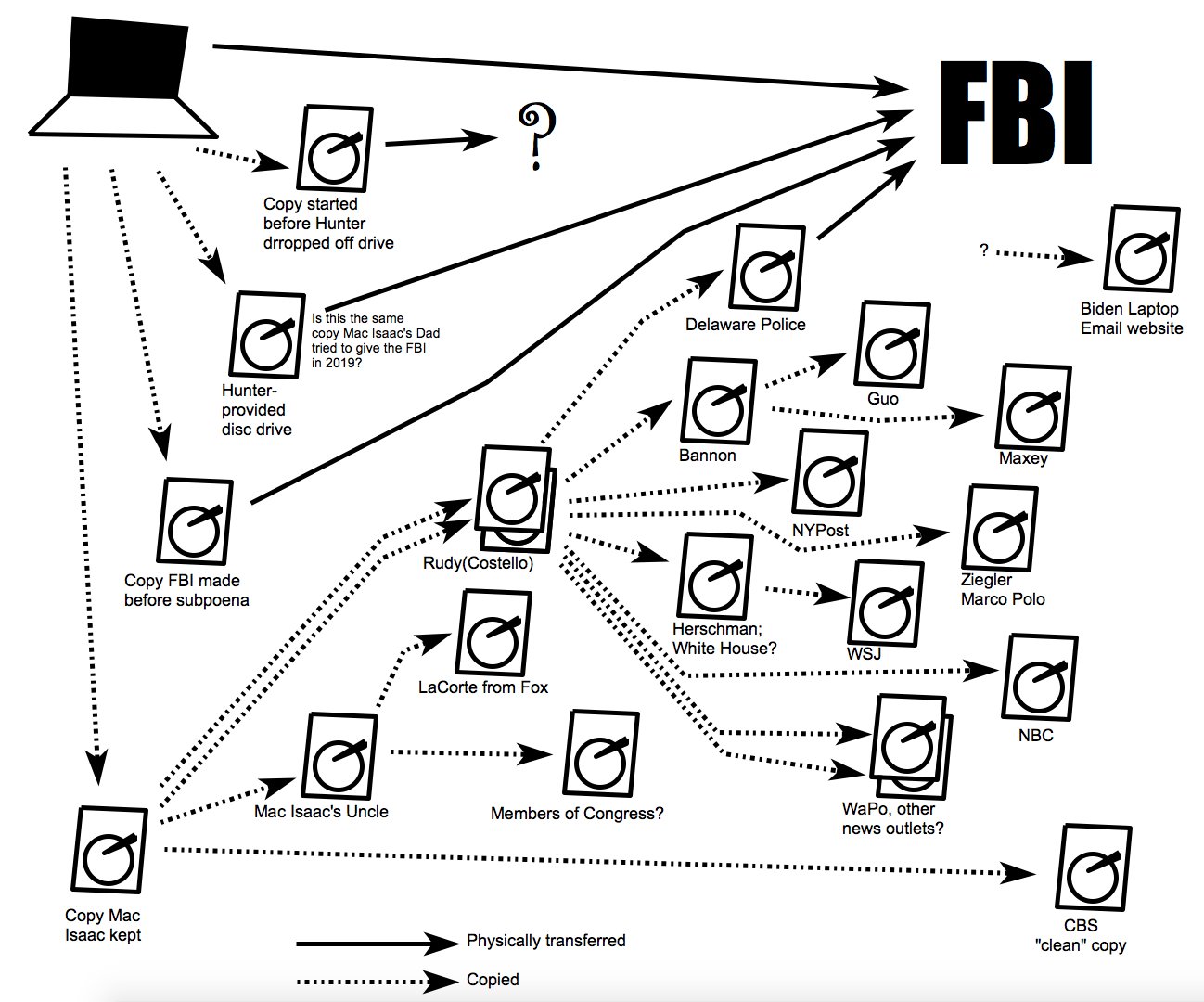

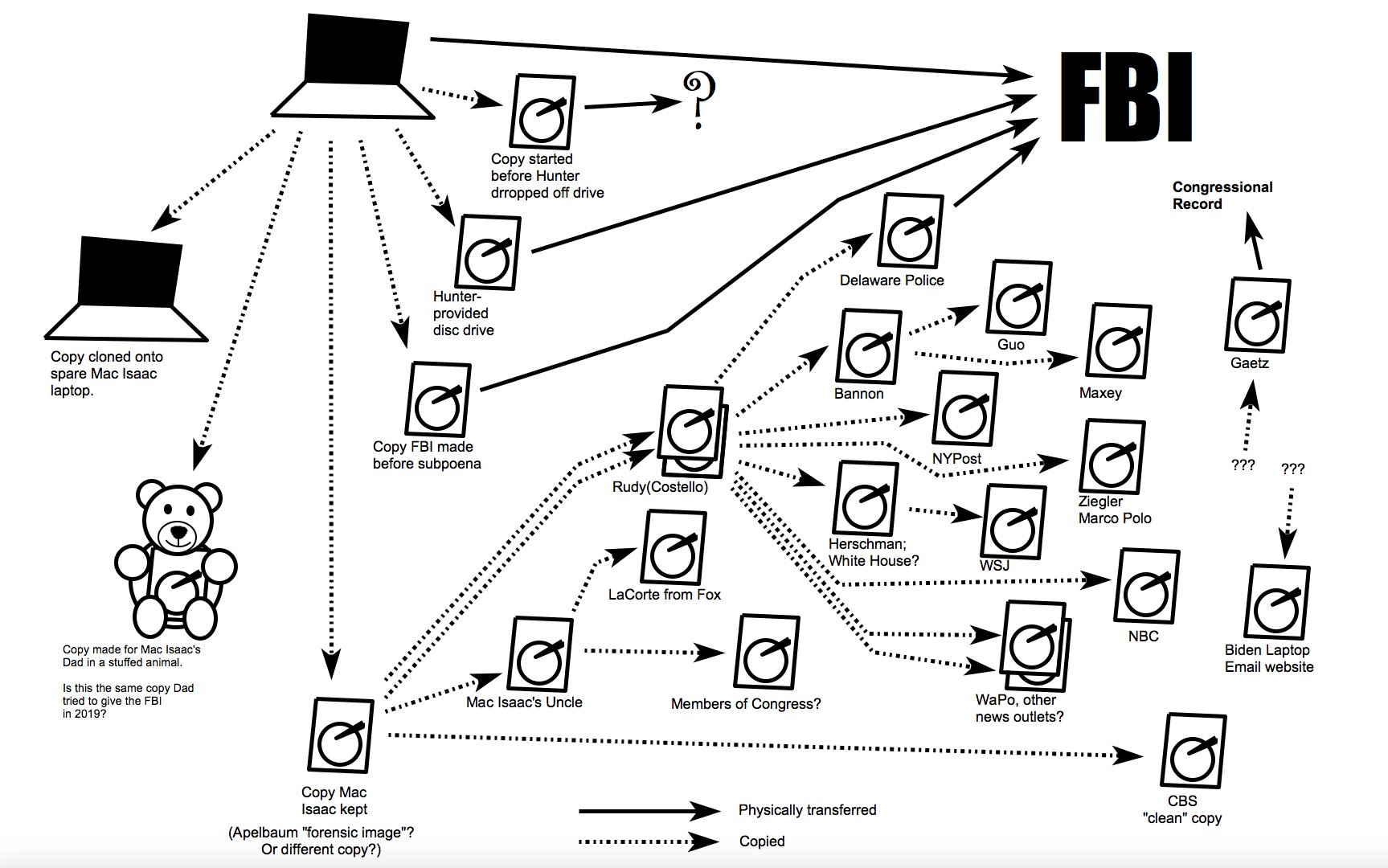

There are a number of things you’d need to do to rule out the possibility of Russian involvement in the process by which a laptop purportedly belonging to Hunter Biden showed up at the Wilmington repair shop of John Paul Mac Isaac, from there to be shared with Rudy Giuliani, who then shared it with three different Murdoch outlets and a ton of other right wing propagandists, many of them members of Congress.

One of those would be to rule out that any of the sex workers tied to this escort service had a role in compromising Hunter Biden’s digital identity, thereby obtaining credential information that would make it easy to package up a laptop that would be especially useful to those trying to destroy the life of the son of Donald Trump’s opponent. There’s no evidence that any of the sex workers were involved, but throughout 2018, there are a number of device accesses involving Hunter’s Venmo account, the iCloud account packaged up on “the laptop,” and different Google accounts — including between the day on November 13 when Hunter appears to have met the woman with the Slavic name and the date on November 26 when he wired her money — that should at least raise concerns that his digital identity had been compromised. I’ve laid out just a fraction of them in this post and this post, both of which focus on the later period when Hunter was in the care of the former Fox News pundit.

If you wanted to compromise Hunter Biden, as certain Russian-backed agents in Ukraine explicitly did, doing so via the sex workers, drug dealers, and fellow junkies he consorted with in this period would be painfully easy. Indeed, in Hunter’s book, he even described other addicts walking off with his, “watch or jacket or iPad—happened all the time.” Every single one of those iPads that walked away might include the keys to Hunter’s digital life, and as such, would be worth a tremendous amount of money to those looking to score their next fix. To rule out Russian involvement, you’d have to ID every single one of them and rule out that they were used for ongoing compromise of Hunter or, barring that, you’d have to come up with explanations, such as the likelihood that Hunter was trying to pay a sex worker but didn’t have his phone with him and so used hers, for the huge number of accesses to his accounts, especially the iCloud account ultimately packaged up.

Of course, explaining how a laptop purportedly belonging to Hunter Biden showed up at Mac Isaac’s shop would also require explaining how a laptop definitely belonging to Hunter Biden came to be left in former Fox News pundit Keith Ablow’s possession during precisely the same period when (it appears) Hunter Biden’s digital life was getting packaged up, a laptop Ablow did nothing to return to its owner and so still had when the DEA seized it.

Bret Baier lied about the Hunter Biden laptop

Given the unanswered questions about the role of a former Fox News pundit in all this, you’d think that Fox personalities would scrupulously adhere to the truth about the matter, if for no other reason than to avoid being legally implicated in any conspiracies their former colleague might have been involved with, or to avoid kicking off another expensive defamation lawsuit.

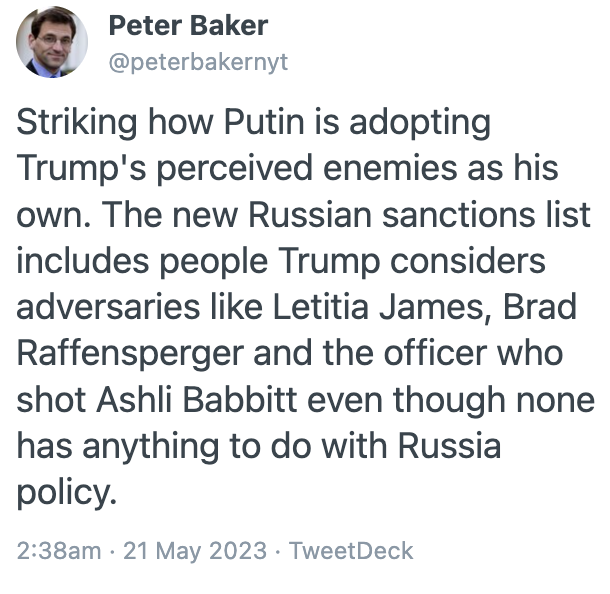

Sadly, Bret Baier couldn’t manage to stick to the truth in his attempt to sandbag former CIA Director Leon Panetta on Friday. Baier debauched the gravity of an appearance purportedly focused on the Hamas attack and aftermath, with what he must have thought was a clever gotcha question about a letter Leon Panetta signed in October 2020 stating the opinion that the emails being pitched by Murdoch outlet New York Post, “has all the classic earmarks of a Russian information operation.” The letter not only expressed an opinion, but it cited four specific data points and two observations about known Russian methods, all of which were and remain true to this day.

And in the process, Bret Baier made a false claim.

Bret Baier made a false claim and all of Fox News’ watchers and all the other propagandists made the clip of Bret Baier making a false claim go viral, because they apparently either don’t know or don’t care that Baier couldn’t even get basic facts right. They are positively giddy that Baier used the tragedy of a terrorist attack to demonstrate his own ignorance or willful deceit about Fox’s favorite story, Hunter Biden’s dick pics.

From the get-go, Baier adopted a rhetorical move commonly used by Murdoch employees and frothy right wingers sustaining their blind faith in “the laptop:” He conflated “the laptop” with individual emails.

Baier: I’d be remiss if I didn’t ask you about that letter you signed onto from former intelligence officials saying that the laptop and the emails had all the classic earmarks of a Russian information operation. Obviously the New York Post and others saying the Hunter Biden letter was the real disinformation all along. Um, that letter was used in the debate, I haven’t asked you this. But do you have regrets about that, now looking back, knowing what you know now? [my emphasis]

The spooks’ letter Panetta signed addressed emails, not “the laptop.” The only use of the word “laptop” in the letter was in labeling this a potential “laptop op,” a way to package up emails meant to discredit Joe Biden. The letter even includes “the dumping of accurate information” among the methods used in Russian information operations.

Having conflated emails and “the laptop,” Baier then asked whether Panetta thinks “it,” now referring just to “the laptop,” not even the hard drives holding copies from the laptop in question, was real.

Panetta: Well, you know, Bret, I was extremely concerned about Russian interference and misinformation. And we all know it. Intelligence agencies discovered that Russia had continued to push disinformation across the board. And my concern was to kind of alert the public to be aware that these disinformation efforts went on. And frankly, I haven’t seen any evidence from any intelligence that that was not the case.

Baier: You don’t think that it was real?

Having first conflated emails and the laptop, then substituted the laptop for the emails addressed in the letter, Baier then falsely claimed that, “Hunter Biden said it was his laptop.”

Panetta: I think that, I think that disinformation is involved here. I think Russian disinformation is part of what we’re seeing everywhere. I don’t trust the Russians. And that’s exactly why I was concerned that the public not trust the Russians either.

Baier: I don’t want to dwell on this because we have bigger things to talk about. Bigger urgency. But obviously, Hunter Biden said it was his laptop, and this investigation continues. [my emphasis]

I understand how frothy right wingers misunderstand what Hunter Biden has said about the data associated with “the laptop,” but Baier presents as a journalist, and you’d think he’d take the time to read the primary documents.

Hunter Biden admits some data is his, but denies knowledge of the “laptop”

The claim that Hunter Biden has said “the laptop” was his arises from three lawsuits: first, from Hunter Biden’s response and counterclaim to John Paul Mac Isaac’s lawsuit, then from Hunter’s lawsuit against Garrett Ziegler, and finally, from the lawsuit against Rudy Giuliani.

Regarding the first of those filings, Hunter Biden based his countersuit against JPMI on an admission that JPMI came into possession of electronically stored data, at least some of which belonged to him. But he specifically did not admit that JPMI “possessed any particular laptop … belonging to Mr. Biden.”

5. In or before April 2019, Counterclaim Defendant Mac Isaac, by whatever means, came into possession of certain electronically stored data, at least some of which belonged to Counterclaim Plaintiff Biden.1

1 This is not an admission by Mr. Biden that Mac Isaac (or others) in fact possessed any particular laptop containing electronically stored data belonging to Mr. Biden. Rather, Mr. Biden simply acknowledges that at some point, Mac Isaac obtained electronically stored data, some of which belonged to Mr. Biden.

Regarding JPMI’s claims that Hunter dropped off the laptop,

169. HUNTER knowingly left his laptop with Plaintiff on April 12, 2019.

170. Soon thereafter HUNTER returned to Plaintiff’s shop to leave an external hard drive to which Plaintiff could transfer the data from HUNTER’s laptop.

171. HUNTER never returned to Plaintiff’s shop pick up his laptop

Hunter denied sufficient knowledge to answer all of them.

169. Mr. Biden is without knowledge sufficient to admit or deny the allegations in paragraph 169.

170. Mr. Biden is without knowledge sufficient to admit or deny the allegations in paragraph 170.

171. Mr. Biden admits that, if he ever had visited before, he did not return to Plaintiff’s shop.

In response to JPMI’s claim that Hunter knew of the phone call his lawyer, George Mesires, made to JPMI in October 2020 and the email follow-up that in any case doesn’t substantiate what JPMI claimed about the phone call,

31. On October 13, 2020, Plaintiff received a call from Mr. George Mesires,1 identifying himself as HUNTER’s attorney, asking if Plaintiff still had possession of his client’s laptop and following up thereafter with an email to the Plaintiff. Copy of email attached as EXHIBIT C.

[snip]

174. HUNTER’s attorney, George Mesires contacted Plaintiff on October 13, 2020 about the laptop.

Hunter admitted that Mesires was his attorney but denied knowing anything more.

31. Mr. Biden admits that Mr. George Mesires was his attorney. Mr. Biden is without knowledge sufficient to admit or deny the remaining allegations in paragraph 31.

[snip]

174. Mr. Biden admits that Mr. Mesires was his attorney. Mr. Biden is without knowledge sufficient to admit or deny the remaining allegations in paragraph 174.

In response to JPMI’s claim that Hunter Biden said something about the laptop without mentioning JPMI,

172. When asked about the laptop in a television interview broadcast around the world, HUNTER stated, “There could be a laptop out there that was stolen from me. It could be that I was hacked. It could be that it was the – that it was Russian intelligence. It could be that it was stolen from me. Or that there was a laptop stolen from me.” See https://edition.cnn.com/2021/04/02/politics/hunterbiden-laptop/index.html.

173. HUNTER knew it was his laptop.

Hunter Biden admitted he made the comment that didn’t mention JPMI — a comment on which JPMI based a $1.5M defamation claim!! — but again denied knowing whether or not the laptop was his.

172. Admitted and Mr. Biden further answers that the statement makes no mention of or even a reference to Plaintiff.

173. Mr. Biden is without knowledge sufficient to admit or deny the allegations in paragraph 173.

Of some interest, in response to JPMI’s claim that the information that appeared in the NYPost came from Hunter, who voluntarily left his laptop with JPMI,

67. The information contained in the NY POST exposé came from HUNTER who voluntarily left his laptop with the Plaintiff and failed to return to retrieve it.

Hunter outright denied the claim.

67. Denied.

Hunter Biden claimed that Rudy hacked Hunter’s data

That last claim — the outright denial that the data in the NYPost story came from Hunter — is of particular interest given something Denver Riggleman recently said. He described that the Hunter Biden team now has the data that JPMI shared with others — apparently thanks to this countersuit — and they’ve used it to compare with the data distributed forward from there.

Also, we know now, since the Hunter Biden team has the John Paul Mac Isaac data that was given to Rudy Giuliani and given to CBS, we also know that that data had no forensic chain of custody and it was not a forensic copy of any type of laptop, or even multiple devices that we can see. It was just a copy-paste of files, more or less.

[snip]

We know that there’s different data sets in different portions of the Internet attributed to Hunter’s data — or, to Hunter’s laptop.

[snip]

Now that we do have forensic data — Hunter Biden team has more forensic data than anybody else out there — we can actually start to compare and contrast. And that’s why you see the aggressiveness from the Hunter Biden legal team.

The lawsuit against Rudy and Costello claims that at some point, Rudy and Costello did things that amount to accessing Hunter’s data unlawfully. Hacking.

23. Following these communications, Mac Isaac apparently sent via FedEx a copy of the data he claimed to have obtained from Plaintiff to Defendant Costello’s personal residence in New York on an “external drive.” Once the data was received by Defendants, Defendants repeatedly “booted up” the drive; they repeatedly accessed Plaintiff’s account to gain access to the drive; and they proceeded to tamper with, manipulate, alter, damage and create “bootable copies” of Plaintiff’s data over a period of many months, if not years. 2

24. Plaintiff has discovered (and is continuing to discover) facts concerning Defendants’ hacking activities and the damages being caused by those activities through Defendants’ public statements in 2022 and 2023. During one interview, which was published on or about September 12, 2022, Defendant Costello demonstrated for a reporter precisely how Defendants had gone about illegally accessing, tampering with, manipulating and altering Plaintiff’s data:

“Sitting at a desk in the living room of his home in Manhasset, [Defendant Costello], who was dressed for golf, booted up his computer. ‘How do I do this again?’ he asked himself, as a login window popped up with [Plaintiff’s] username . . .”3

By booting up and logging into an “external drive” containing Plaintiff’s data and using Plaintiff’s username to gain access Plaintiff’s data, Defendant Costello unlawfully accessed, tampered with and manipulated Plaintiff’s data in violation of federal and state law. Plaintiff is informed and believes and thereon alleges that Defendants used similar means to unlawfully access Plaintiff’s data many times over many months and that their illegal hacking activities are continuing to this day.

[snip]

26. For example, Defendant Costello has stated publicly that, after initially accessing the data, he “scrolled through the laptop’s [i.e., hard drive’s] email inbox” containing Plaintiff’s data reflecting thousands of emails, bank statements and other financial documents. Defendant Costello also has admitted publicly that he accessed and reviewed Plaintiff’s data reflecting what he claimed to be “the laptop’s photo roll,” including personal photos that, according to Defendant Costello himself, “made [him] feel like a voyeur” when he accessed and reviewed them.

27. By way of further example, Defendant Costello has stated publicly that he intentionally tampered with, manipulated, and altered Plaintiff’s data by causing the data to be “cleaned up” from its original form (whatever this means) and by creating “a number of new [digital] folders, with titles like ‘Salacious Pics’ and ‘The Big Guy.’” Neither Mac Issac nor Defendants have ever claimed to use forensically sound methods for their hacking activities. Not surprisingly, forensic experts who have examined for themselves copies of data purportedly obtained from Plaintiff’s “laptop” (which data also appears to have been obtained at some point from Mac Isaac) have found that sloppy or intentional mishandling of the data damaged digital records, altered cryptographic features in the data, and reduced the forensic quality of data to “garbage.”

2 Plaintiff’s investigation indicates that the data Defendant Costello initially received from Mac Isaac was incomplete, was not forensically preserved, and that it had been altered and tampered with before Mac Issac delivered it to Defendant Costello; Defendant Costello then engaged in forensically unsound hacking activities of his own that caused further alterations and additional damage to the data he had received. Discovery is needed to determine exactly what data of Plaintiff Defendants received, when they received it, and the extent to which it was altered, manipulated and damaged both before and after receipt.

3 Andrew Rice & Olivia Nuzzi, The Sordid Saga of Hunter Biden’s Laptop, N.Y. MAG. (Sept. 12, 2022), https://nymag.com/intelligencer/article/hunter-biden-laptop-investigation.html.

I don’t think Hunter’s team would have compared the data Rudy shared with the NYPost before Hunter denied, outright, that “The information contained in the NY POST exposé came from HUNTER.” But based on what Riggleman claimed, they have since made that comparison, and did so before accusing Rudy and a prominent NY lawyer of hacking Hunter Biden’s data.

Hunter Biden’s team admits they don’t know the precise timing of this: “the precise timing and manner by which Defendants obtained Plaintiff’s data remains unknown to Plaintiff.” DDOSecrets points to several emails that suggest Rudy and Costello did more than simply review available data, however. For example, it points to this email created on September 2, 2020, just after the former President’s lawyer got the hard drive.

September 2, 2020: A variation of a Burisma email from 2016 is created and added to the cache. The email and file metadata both indicate it was created on September 2, 2020.

But the lawsuit, if proven, suggests the possibility that between the time JPMI shared the data with Rudy and the time Rudy shared it with NYPost, Rudy may have committed violations of the federal Computer Fraud and Abuse Act. That is, Hunter alleges that between the time JPMI shared the data and the time NYPost published derivative data, Rudy may have hacked Hunter Biden’s data.

If he could prove that, it would mean the basis Twitter gave for throttling the NYPost story in October 2020 (they suspected the story included materials that violated Twitter’s then-prohibition on publishing hacked data) would be entirely vindicated.

For example, on October 14th, 2020, the New York Post tweeted articles about Hunter Biden’s laptop with embedded images that look like they may have been obtained through hacking. In 2018, we had developed a policy intended to, to prevent Twitter from becoming a dumping ground for hacked materials. We applied this policy to the New York Post tweets and blocked links to the articles embedding those source materials. At no point did Twitter otherwise prevent tweeting, reporting, discussing or describing the contents of Mr. Biden’s laptop.

[snip]

My team and I exposed hundreds of thousands of these accounts from Russia, but also from Iran, China and beyond. It’s a concern with these foreign interference campaigns that informed Twitter’s approach to the Hunter Biden laptop story. In 2020, Twitter noticed activity related to the laptop that at first glance bore a lot of similarities to the 2016 Russian hack and leak operation targeting the DNC, and we had to decide what to do, and in that moment with limited information, Twitter made a mistake under the distribution of hacked material policy.

If Hunter can prove that — no matter what happened in the process of packaging up this data before it got to JPMI, whether it involved the compromise of Hunter’s digital identity before JPMI got the data, which itself would have been a hack that would also vindicate Twitter’s throttling of the story — it would mean all the data that has been publicly released is downstream from hacking.

For Twitter, it wouldn’t matter whether the data was hacked by Russia or by Donald Trump’s personal lawyer, it would still violate the policy as it existed at the time.

Importantly, this remains a claim about data, not about a laptop. The lawsuit against Rudy and Costello repeats the claim made in the JPMI counterclaim: while JPMI had data, some of which belongs to Hunter, Hunter is not admitting that any laptop was his, contrary to Bret Baier’s false claim that “Hunter Biden said it was his laptop.”

2. Defendants themselves admit that their purported possession of a “laptop” is in fact not a “laptop” at all. It is, according to their own public statements, an “external drive” that Defendants were told contained hundreds of gigabytes of Plaintiff’s personal data. At least some of the data that Defendants obtained, copied, and proceeded to hack into and tamper with belongs to Plaintiff.1

1 This is not an admission by Plaintiff that John Paul Mac Isaac (or others) in fact possessed any particular laptop containing electronically stored data belonging to Plaintiff. Rather, Plaintiff simply acknowledges that at some point, Mac Isaac obtained electronically stored data, some of which belonged to Plaintiff.

In two lawsuits, Hunter Biden explicitly said that he was not admitting what Baier falsely claimed he had.

I know this is Fox News, but Baier just blithely interrupted a sober discussion about a terrorist attack to make a false claim about “the laptop.”

Hunter Biden claims that Garrett Ziegler hacked Hunter’s iPhone

Hunter Biden’s approach is different in the Garrett Ziegler lawsuit, in which he notes over and over that Ziegler bragged about accessing something he claimed to be Hunter Biden’s laptop, but which was really, “a hard drive that Defendants claim to be of Plaintiff’s ‘laptop’ computer.” By the time things got so far downstream to Ziegler, there was no pretense this was actually a laptop, no matter what Baier interrupted a discussion about terrorism to falsely claim.

But that paragraph explicitly denying admission about this being a laptop is not in the Ziegler suit.

There’s a likely reason for that. The core part of the claim against Ziegler is that Ziegler unlawfully accessed a real back-up of Hunter Biden’s iPhone, which was stored in encrypted form in iTunes — just as I laid out had to have happened months before that lawsuit.

28. Plaintiff further is informed and believes and thereon alleges that at least some of the data that Defendants have accessed, tampered with, manipulated, damaged and copied without Plaintiff’s authorization or consent originally was stored on Plaintiff’s iPhone and backed-up to Plaintiff’s iCloud storage. On information and belief, Defendants gained their unlawful access to Plaintiff’s iPhone data by circumventing technical or code-based barriers that were specifically designed and intended to prevent such access.

29. In an interview that occurred in or around December 2022, Defendant Ziegler bragged that Defendants had hacked their way into data purportedly stored on or originating from Plaintiff’s iPhone: “And we actually got into [Plaintiff’s] iPhone backup, we were the first group to do it in June of 2022, we cracked the encrypted code that was stored on his laptop.” After “cracking the encrypted code that was stored on [Plaintiff’s] laptop,” Defendants illegally accessed the data from the iPhone backup, and then uploaded Plaintiff’s encrypted iPhone data to their website, where it remains accessible to this day. It appears that data that Defendants have uploaded to their website from Plaintiff’s encrypted “iPhone backup,” like data that Defendants have uploaded from their copy of the hard drive of the “Biden laptop,” has been manipulated, tampered with, altered and/or damaged by Defendants. The precise nature and extent of Defendants’ manipulation, tampering, alteration, damage and copying of Plaintiff’s data, either from their copy of the hard drive of the claimed “Biden laptop” or from Plaintiff’s encrypted “iPhone backup” (or from some other source), is unknown to Plaintiff due to Defendants’ continuing refusal to return the data to Plaintiff so that it can be analyzed or inspected. [my emphasis]

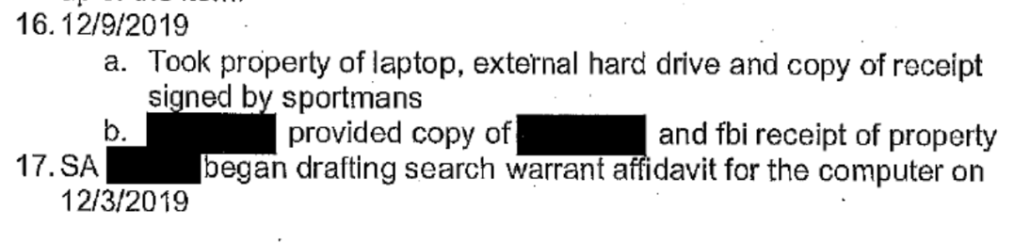

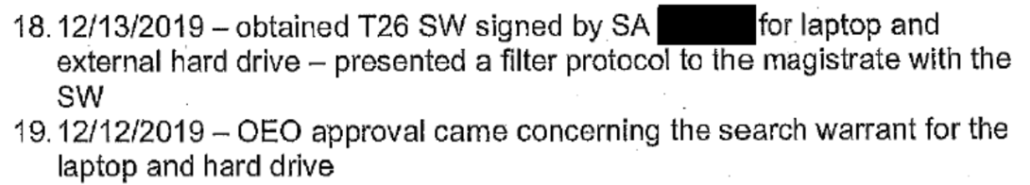

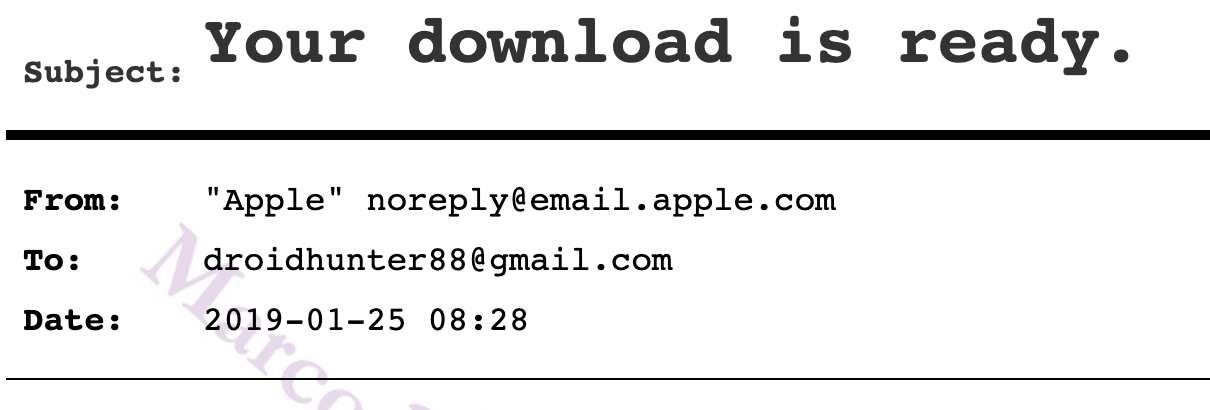

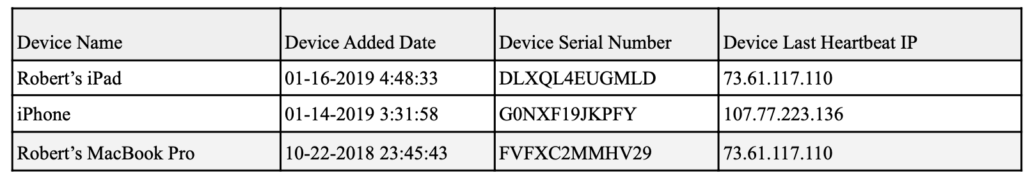

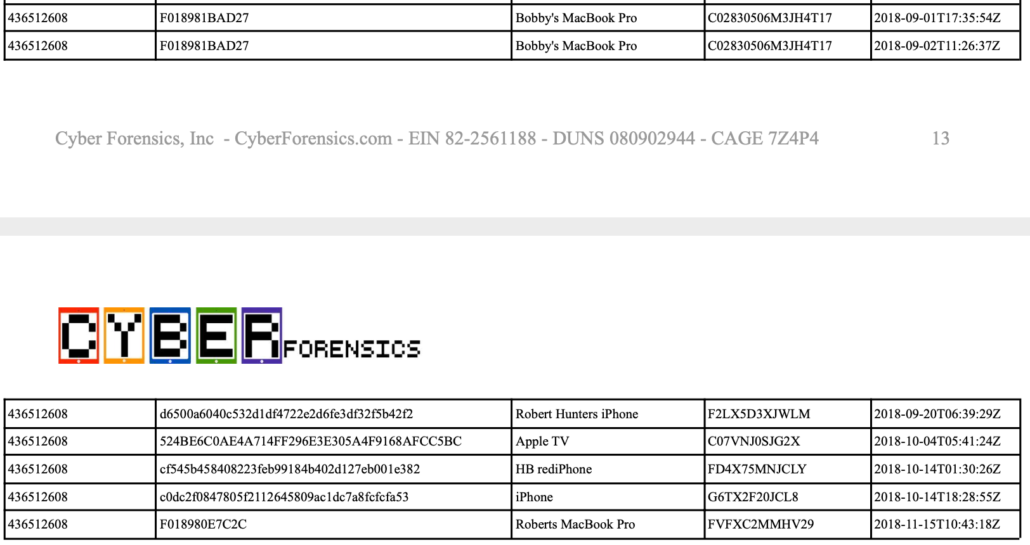

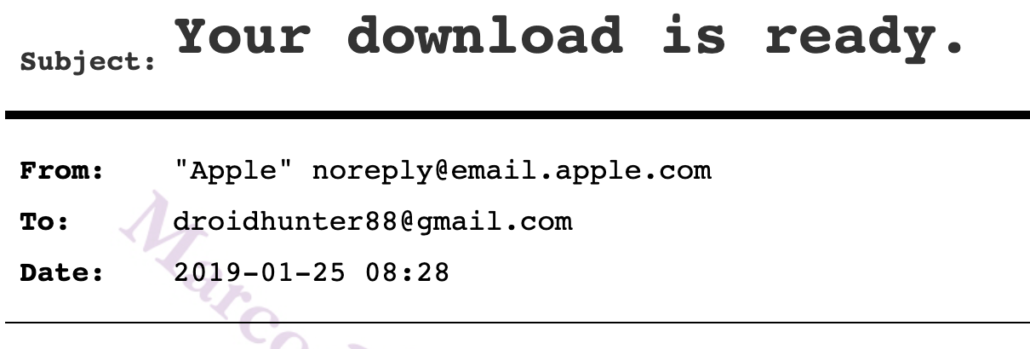

Hunter Biden’s team has backup for this assertion, thanks to the notes Gary Shapley took in an October 22, 2020 meeting about what was an actual laptop JPMI handed over to the FBI. On that laptop (which the FBI had confirmed was associated with Hunter Biden’s iCloud account and which it tied to data that could all be falsifiable to someone in possession of the laptop, which had means to intercept and redirect emails and calls to Hunter’s real devices, but which the FBI still had not validated 10 months after obtaining it), the iPhone content was encrypted.

Laptop — iphone messages were on the hard drive but encrypted they didn’t get those messages until they looked at laptop and found a business card with the password on it so they were able to get into the iphone messages [my emphasis]

Even the FBI needed to find a password to access the iPhone content that Ziegler has bragged about accessing. (Note: there have been four known accesses to this data, and every single one of them claims to have used a different means to break the encryption, which in my mind raises real questions about the nature of the business card.) But the FBI had a warrant. Ziegler did not.

There are still a great many questions one would have to answer before entirely ruling out that Russians were involved in the process of packaging up Hunter Biden’s digital identity; the possible role of a Russian escort service is only one of at least three possible ways Russia might be involved. Yet Bret Baier is unwilling to pursue those questions, starting with the unanswered questions about the role that Baier’s former Fox News colleague played.

But with all those unanswered questions, Baier was nevertheless willing to interrupt a discussion about terrorism to make false claims about what is known.

Update: I’ve taken out that this was specifically a Russian escort service. Some outlets claim Eva is Ukrainian. Dimitrelos does claim that Hunter searched for “Russian escort service,” though.

Update: Added the Blue Water Wellness Intramuscular Injection ad from October 2018.

Update: Added the observation about a newly created email from DDOSecrets.

Update: I was reminded of Bret Baier’s opinion in the same days when Leon Panetta was expressing his doubts about this story.

During a panel on his Thursday evening show, Baier addressed the Post’s story and the decision by both Twitter and Facebook to limit sharing of the story on their respective platforms because of concerns about spreading misinformation. The move elicited fierce pushback from conservatives and sparked a vote on a Congressional subpoena of Twitter CEO Jack Dorsey.

“The Biden campaign says the meeting never happened, it wasn’t on the schedules, they say,” Baier noted. “And the email itself says ‘set up’ for a meeting” instead of discussing an actual meeting.

Baier then played an audio clip from a SiriusXM radio interview of Giuliani, where he appeared to alter the original details of who dropped off the laptop from which the emails in question were purportedly obtained. The computer store owner who gave a copy of the laptop’s hard drive to Giuliani was also heard explaining how he is legally blind and couldn’t for certain identify just who delivered the computer to him.

“Let’s say, just not sugarcoat it. The whole thing is sketchy,” Baier acknowledged. “You couldn’t write this script in 19 days from an election, but we are digging into where this computer is and the emails and the authenticity of it.”

Featured image courtesy of Thomas Fine.

*As I have noted in the past, Dimitrelos prohibited me from republishing his reports unless I indemnify him for the privacy violations involved. I have chosen instead — and am still attempting — to get permission from Hunter Biden’s representatives to reproduce redacted parts of this report that strongly back Hunter’s claim of being hacked.