Update: I’m republishing this and bumping it, because Trump just replaced Glenn Fine, whom Michael Horowitz had named to head the Pandemic Response Accountability Committee, as DOD’s Acting Inspector General with the IG for EPA. This makes Fine ineligible to head PRAC. Fine will remain Principal Deputy IG.

Late last night, President Trump fired the Intelligence Community Inspector General Michael Atkinson, the Inspector General who alerted Congress to the whistleblower complaint that led to Trump’s impeachment. Trump effectively put Atkinson on administrative leave for 30 days in a move that skirts the legal requirement that an inspector general be fired for cause and Congress be notified of it.

Trump has been accused of firing Atkinson late at night on a Friday under cover of the pandemic to retaliate for the role Atkinson had in Trump’s impeachment, a role that consisted of nothing more than doing his job as carefully laid out by law. The firing no doubt is retaliation.

But it’s also likely about the pandemic and Trump’s proactive attempts to avoid any accountability for his failures in both the pandemic response and the reconstruction from it.

There were a lot of pandemic warnings Trump ignored that he wants to avoid becoming public

I say that, first of all, because of the likelihood that Trump will need to cover up what intelligence he received, alerting him to the severity of the coming pandemic. Trump’s administration was warned by the intelligence community no later than January 3, and a month later, that’s what a majority of Trump’s intelligence briefings consisted of. But Trump didn’t want to talk about it, in part because he didn’t believe the intelligence he was getting.

At a White House briefing Friday, Health and Human Services Secretary Alex Azar said officials had been alerted to the initial reports of the virus by discussions that the director of the Centers for Disease Control and Prevention had with Chinese colleagues on Jan. 3.

The warnings from U.S. intelligence agencies increased in volume toward the end of January and into early February, said officials familiar with the reports. By then, a majority of the intelligence reporting included in daily briefing papers and digests from the Office of the Director of National Intelligence and the CIA was about covid-19, said officials who have read the reports.

[snip]

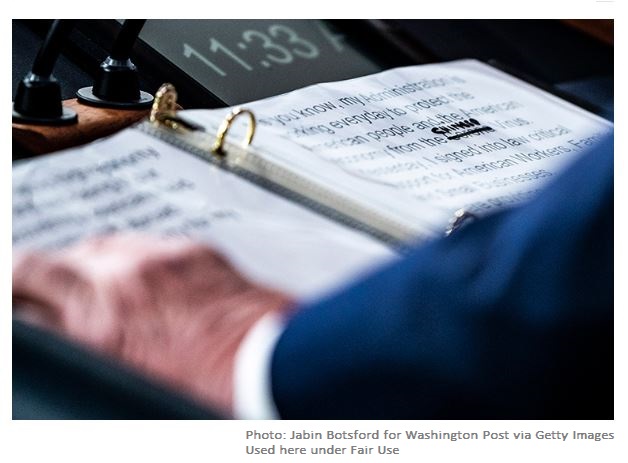

Inside the White House, Trump’s advisers struggled to get him to take the virus seriously, according to multiple officials with knowledge of meetings among those advisers and with the president.

Azar couldn’t get through to Trump to speak with him about the virus until Jan. 18, according to two senior administration officials. When he reached Trump by phone, the president interjected to ask about vaping and when flavored vaping products would be back on the market, the senior administration officials said.

On Jan. 27, White House aides huddled with then-acting chief of staff Mick Mulvaney in his office, trying to get senior officials to pay more attention to the virus, according to people briefed on the meeting. Joe Grogan, the head of the White House Domestic Policy Council, argued that the administration needed to take the virus seriously or it could cost the president his reelection, and that dealing with the virus was likely to dominate life in the United States for many months.

Mulvaney then began convening more regular meetings. In early briefings, however, officials said Trump was dismissive because he did not believe that the virus had spread widely throughout the United States.

In that same period, Trump was demanding that Health and Human Services Secretary Alex Azar treat coronavirus briefings as classified.

The officials said that dozens of classified discussions about such topics as the scope of infections, quarantines and travel restrictions have been held since mid-January in a high-security meeting room at the Department of Health & Human Services (HHS), a key player in the fight against the coronavirus.

Staffers without security clearances, including government experts, were excluded from the interagency meetings, which included video conference calls, the sources said.

“We had some very critical people who did not have security clearances who could not go,” one official said. “These should not be classified meetings. It was unnecessary.”

The sources said the National Security Council (NSC), which advises the president on security issues, ordered the classification. “This came directly from the White House,” one official said.

Now, it could be that this information was legitimately classified. But if so, it means Trump had even more — and higher quality — warning of the impending pandemic than we know. If not, then it was an abuse of the classification process in an attempt to avoid having to deal with it. Either one of those possibilities further condemns Trump’s response.

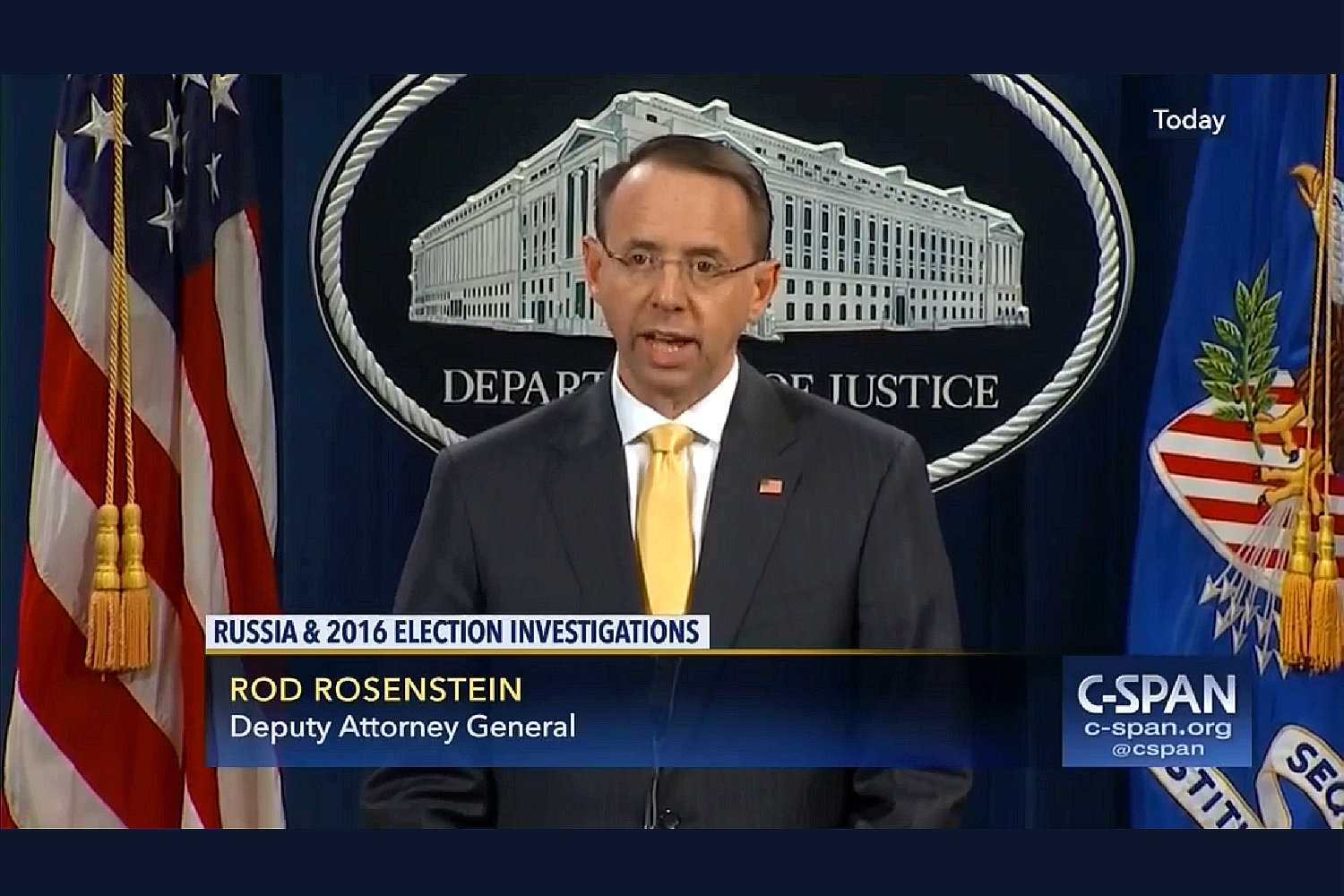

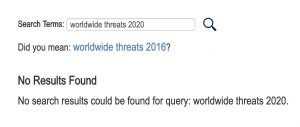

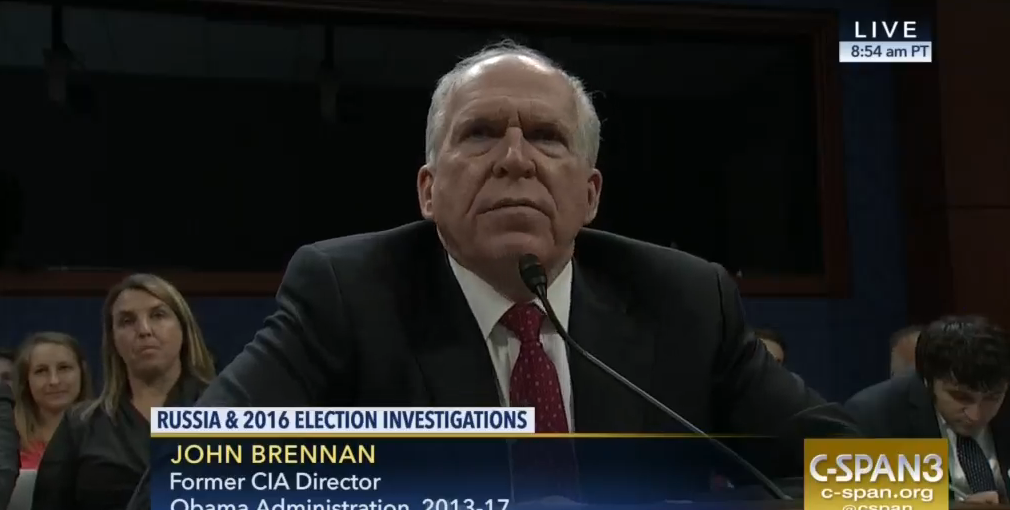

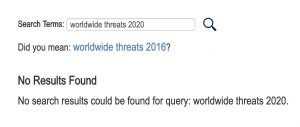

Also in this same period, then Director of National Intelligence Joseph Maguire was asking not to hold a public Worldwide Threats hearing because doing so would amount to publicly reporting on facts that the President was in denial about.

The U.S. intelligence community is trying to persuade House and Senate lawmakers to drop the public portion of an annual briefing on the globe’s greatest security threats — a move compelled by last year’s session that provoked an angry outburst from President Donald Trump, multiple sources told POLITICO.

Officials from the Office of the Director of National Intelligence, on behalf of the larger clandestine community, don’t want agency chiefs to be seen on-camera as disagreeing with the president on big issues such as Iran, Russia or North Korea, according to three people familiar with preliminary negotiations over what’s known as the Worldwide Threats hearing.

The request, which is unlikely to be approved, has been made through initial, informal conversations at the staff level between Capitol Hill and the clandestine community, the people said.

Not only did that hearing never happen, but no report has been released either.

Among the things then Director of National Intelligence Dan Coats warned of in last year’s hearing was the threat of a pandemic.

We assess that the United States and the world will remain vulnerable to the next flu pandemic or large-scale outbreak of a contagious disease that could lead to massive rates of death and disability, severely affect the world economy, strain international resources, and increase calls on the United States for support. Although the international community has made tenuous improvements to global health security, these gains may be inadequate to address the challenge of what we anticipate will be more frequent outbreaks of infectious diseases because of rapid unplanned urbanization, prolonged humanitarian crises, human incursion into previously unsettled land, expansion of international travel and trade, and regional climate change.

So to some degree, Trump has to make sure there’s no accountability in the intelligence community because if there is, his failure to prepare for the pandemic will become all the more obvious.

Richard Burr is incapable of defending the Intelligence Community right now

But it’s also the case that the pandemic, and the treatment of early warnings about it, may have created an opportunity to retaliate against Atkinson when Trump might not have otherwise been able to. Even beyond offering cover under the distraction of thousands of preventable deaths, the pandemic, and Senate Intelligence Committee Chair Richard Burr’s success at profiting off it, mean that the only Republican who might have pushed back against this action is stymied.

On Sunday, multiple outlets reported that DOJ is investigating a series of stock trades made before most people understood how bad the pandemic would be. Burr is represented by former Criminal Division head Alice Fisher, certainly the kind of lawyer whose connections and past white collar work would come in handy for someone trying to get away with corruption.

The Justice Department has started to probe a series of stock transactions made by lawmakers ahead of the sharp market downturn stemming from the spread of coronavirus, according to two people familiar with the matter.

The inquiry, which is still in its early stages and being done in coordination with the Securities and Exchange Commission, has so far included outreach from the FBI to at least one lawmaker, Sen. Richard Burr, seeking information about the trades, according to one of the sources.

[snip]

Burr, the North Carolina Republican who heads the Senate Intelligence Committee, has previously said that he relied only on public news reports as he decided to sell between $628,000 and $1.7 million in stocks on February 13. Earlier this month, he asked the Senate Ethics Committee to review the trades given “the assumption many could make in hindsight,” he said at the time.

There’s no indication that any of the sales, including Burr’s, broke any laws or ran afoul of Senate rules. But the sales have come under fire after senators received closed-door briefings about the virus over the past several weeks — before the market began trending downward. It is routine for the FBI and SEC to review stock trades when there is public question about their propriety.

In a statement Sunday to CNN, Alice Fisher, a lawyer for Burr, said that the senator “welcomes a thorough review of the facts in this matter, which will establish that his actions were appropriate.”

“The law is clear that any American — including a Senator — may participate in the stock market based on public information, as Senator Burr did. When this issue arose, Senator Burr immediately asked the Senate Ethics Committee to conduct a complete review, and he will cooperate with that review as well as any other appropriate inquiry,” said Fisher, who led the Justice Department’s criminal division under former President George W. Bush.

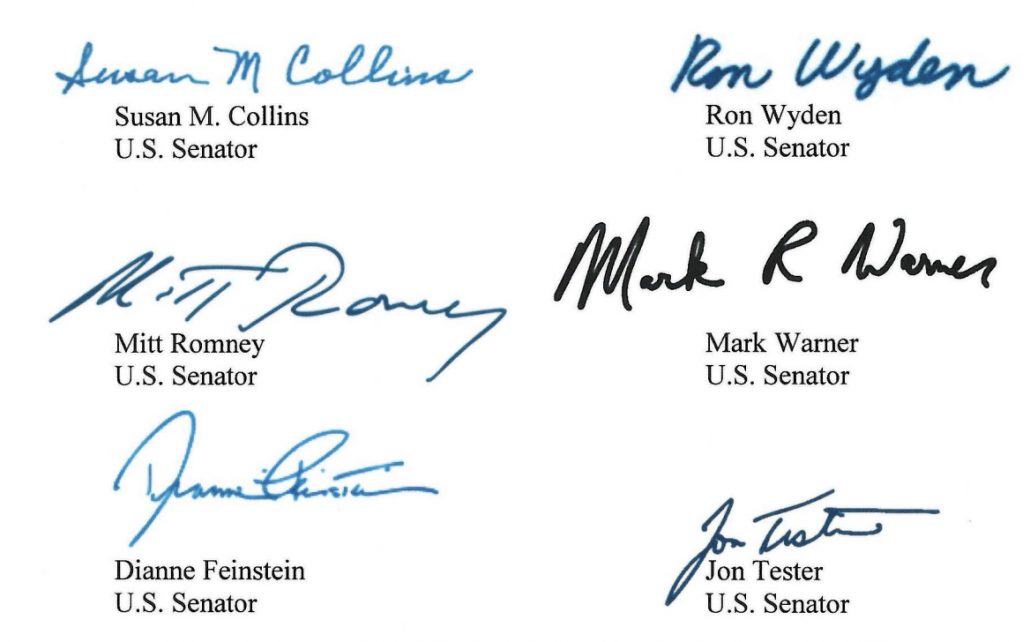

In spite of Fisher’s bravado, Burr is by far the most legally vulnerable of the senators who dumped a lot of stock in the period. That’s partly because he had access to two streams of non-public reporting on the crisis: the most classified one, on SSCI (which Senator Feinstein also would have had), and a second on the Health, Education, Labor, and Pensions committee. And unlike the other senators, Burr admitted that he made these trades himself.

Again, in spite of Fisher’s claims, Burr will be forced to affirmatively show that he didn’t rely on this non-public information when dumping an inordinate amount of stock.

All of which is to say that Burr may be hoping that Fisher can talk him out of any legal exposure, which will require placating the thoroughly corrupt Bill Barr.

I had already thought that Trump might use this leverage to influence the findings or timing of the remaining parts of SSCI’s Russian investigation. That’s all the more true of Atkinson’s firing. Thus far, Burr has remained silent on what is obviously a legally inappropriate firing.

Even as he fired Atkinson, Trump undermined any oversight of his pandemic recovery efforts

A week before firing Atkinson, Trump made it clear he had no intention of being bound by Inspectors General in his signing statement for the “CARES Act” recovery bill. In addition to stating that Steve Mnuchin could reallocate spending without prior notice to Congress (as required by the bill and the Constitution), Trump also undercut both oversight mechanisms in the law. He did so by suggesting that the Chairperson of the Council of the Inspectors General on Integrity and Efficiency (who is DOJ’s Inspector General Michael Horowitz) should not be required to consult with Congress about whom he should make Executive Director and Deputy Executive Director of the Pandemic Response Accountability Committee.

Section 15010(c)(3)(B) of Division B of the Act purports to require the Chairperson of the Council of the Inspectors General on Integrity and Efficiency to consult with members of the Congress regarding the selection of the Executive Director and Deputy Executive Director for the newly formed Pandemic Response Accountability Committee. The Committee is an executive branch entity charged with conducting and coordinating oversight of the Federal Government’s response to the coronavirus outbreak. I anticipate that the Chairperson will be able to consult with members of the Congress with respect to these hiring decisions and will welcome their input. But a requirement to consult with the Congress regarding executive decision-making, including with respect to the President’s Article II authority to oversee executive branch operations, violates the separation of powers by intruding upon the President’s power and duty to supervise the staffing of the executive branch under Article II, section 1 (vesting the President with the “executive Power”) and Article II, section 3 (instructing the President to “take Care” that the laws are faithfully executed). Accordingly, my Administration will treat this provision as hortatory but not mandatory.

On Monday, Horowitz named DOD Acting Inspector General Glenn Fine Chair of PRAC.

In appointing Mr. Fine to Chair the PRAC, Mr. Horowitz stated, “Mr. Fine is uniquely qualified to lead the Pandemic Response Accountability Committee, given his more than 15 years of experience as an Inspector General overseeing large organizations — 11 years as the Department of Justice Inspector General and the last 4 years performing the duties of the Department of Defense Inspector General. The Inspector General Community recognizes the need for transparency surrounding, and strong and effective independent oversight of, the federal government’s spending in response to this public health crisis. Through our individual offices, as well as through CIGIE and the Committee led by Mr. Fine, the Inspectors General will carry out this critical mission on behalf of American taxpayers, families, businesses, patients, and health care providers.”

Last night, however, after years of leaving DOD’s IG position vacant, Trump nominated someone who has never managed an office like DOD’s Inspector General, which oversees a budget larger than that of many nation-states, and who is currently at the hyper-politicized Customs and Border Protection.

Jason Abend of Virginia, to be Inspector General, Department of Defense.

Mr. Abend currently serves as Senior Policy Advisor, United States Customs and Border Protection.

Prior to his current role, Mr. Abend served in the Federal Housing Finance Agency’s Office of Inspector General as a Special Agent. Before that, he served as a Special Agent in the Department of Housing and Urban Development’s Office of Inspector General, where he led a team investigating complex Federal Housing Administration mortgage and reverse mortgage fraud, civil fraud, public housing assistance fraud, and internal agency personnel cases.

Mr. Abend was also the Founder and CEO of the Public Safety Media Group, LLC, a professional services firm that provided strategic and operational human resources consulting, training, and advertising to Federal, State, and local public safety agencies, the United States Military, and Intelligence agencies.

Earlier in his career, Mr. Abend worked as a Special Agent at the United States Secret Service and as an Intelligence Research Specialist at the Federal Bureau of Investigation.

Mr. Abend received his bachelor’s degree from American University and has received master’s degrees from both American University and George Washington University.

Abend seems totally unqualified for the DOD job alone, but if he is confirmed, he would also make Fine ineligible to head PRAC.

Horowitz issued a statement on Atkinson’s firing today that emphasized that Atkinson had acted appropriately with the Ukraine investigation, as well as the IG community’s intent to conduct rigorous oversight, including (perhaps especially) through PRAC.

Inspector General Atkinson is known throughout the Inspector General community for his integrity, professionalism, and commitment to the rule of law and independent oversight. That includes his actions in handling the Ukraine whistleblower complaint, which the then Acting Director of National Intelligence stated in congressional testimony was done “by the book” and consistent with the law. The Inspector General Community will continue to conduct aggressive, independent oversight of the agencies that we oversee. This includes CIGIE’s Pandemic Response Accountability Committee and its efforts on behalf of American taxpayers, families, businesses, patients, and health care providers to ensure that over $2 trillion dollars in emergency federal spending is being used consistently with the law’s mandate.

Also in last week’s signing statement, Trump said he would not permit an Inspector General appointed to oversee the financial side of the recovery to report to Congress when Treasury refuses to share information.

Section 4018 of Division A of the Act establishes a new Special Inspector General for Pandemic Recovery (SIGPR) within the Department of the Treasury to manage audits and investigations of loans and investments made by the Secretary of the Treasury under the Act. Section 4018(e)(4)(B) of the Act authorizes the SIGPR to request information from other government agencies and requires the SIGPR to report to the Congress “without delay” any refusal of such a request that “in the judgment of the Special Inspector General” is unreasonable. I do not understand, and my Administration will not treat, this provision as permitting the SIGPR to issue reports to the Congress without the presidential supervision required by the Take Care Clause, Article II, section 3.

That may not matter now, because Trump just nominated for that SIGPR role one of the lawyers who helped him navigate impeachment.

Brian D. Miller of Virginia, to be Special Inspector General for Pandemic Recovery, Department of the Treasury.

Mr. Miller currently serves as Special Assistant to the President and Senior Associate Counsel in the Office of White House Counsel. Prior to his current role, Mr. Miller served as an independent corporate monitor and an expert witness. He also practiced law in the areas of ethics and compliance, government contracts, internal investigations, white collar, and suspension and debarment. Mr. Miller has successfully represented clients in government investigations and audits, suspension and debarment proceedings, False Claims Act, and criminal cases.

Mr. Miller served as the Senate-confirmed Inspector General for the General Services Administration for nearly a decade, where he led more than 300 auditors, special agents, attorneys, and support staff in conducting nationwide audits and investigations. As Inspector General, Mr. Miller reported on fraud, waste, and abuse, most notably with respect to excesses at a GSA conference in Las Vegas.

Mr. Miller also served in high-level positions within the Department of Justice, including as Senior Counsel to the Deputy Attorney General and as Special Counsel on Healthcare Fraud. He also served as an Assistant United States Attorney in the Eastern District of Virginia, where he handled civil fraud, False Claims Act, criminal, and appellate cases.

Mr. Miller received his bachelor’s degree from Temple University, his juris doctorate from the University of Texas, and his Master of Arts from Westminster Theological Seminary.

To be fair, unlike Abend, Miller is absolutely qualified for the SIGPR position (which means he’ll be harder to block in the Senate). But by picking someone who has already demonstrated his willingness to put loyalty ahead of the Constitution, Trump has provided Mnuchin one more assurance that he can loot the bailout with almost no oversight.