Last Wednesday, majority staffers for the Senate Finance and Homeland Security Committees wrote Chuck Grassley and Ron Johnson a memo that purports to update those Committee chairs on the status of an investigation. The purpose of the investigation is actually not clear, but ultimately it’s an investigation designed to keep alive hopes of finding some smoking gun in Hillary’s servers that several other investigations haven’t found, one that Grassley has been pursuing for four years.

As the memo describes, the most recent steps in this “investigation” involve some interviews that were completed and all related backup documentation obtained in April, four months ago.

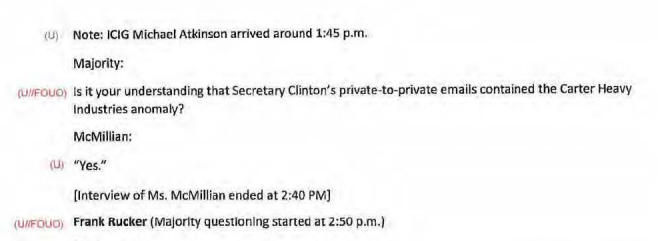

We pursued this issue by requesting interviews with the two ICIG officials. On December 4, 2018, your staff, along with staff from Senators Feinstein and McCaskill, interviewed ICIG employees Mr. Rucker and Ms. McMillian. On December 20, 2018, you transmitted a copy of an interview summary of the Majority’s questions and the witness’s answers to the ICIG for a classification review. On January 30, 2019, the ICIG provided classified and unclassified versions of the interview summary, and the Office of Senate Security redacted the classified information. On February 28, 2019, the ICIG provided documentary evidence including copies of emails and notes from meetings. On April 9, 2019, the DOJ IG and ICIG provided a summary of their findings related to these Chinese hacking allegations.

The staffers use these investigative steps, completed four months ago, to make two insinuations. The first is that State tried to treat Hillary’s emails as deliberative rather than classified (something long known, and easily explained by the known debate over retroactive classification for the emails).

In addition, the staffers report that one Intelligence Community Inspector General employee, but not the second, remarked that FBI agents seemed nonplussed by their concerns that China had hacked Hillary. The description of that claim in the topline of the memo drops Peter Strzok’s name as its hook.

[A]ccording to one ICIG official, some members of the FBI investigative team seemed indifferent to evidence of a possible intrusion by a foreign adversary into Secretary Clinton’s non-government server. The interview summary makes clear exactly what information Mr. Rucker and Ms. McMillian knew regarding the alleged hack of the Clinton server, as well as the information they shared with the FBI team, including Peter Strzok, the Deputy Assistant Director of the FBI’s Counterintelligence Division in charge of the Clinton investigation.

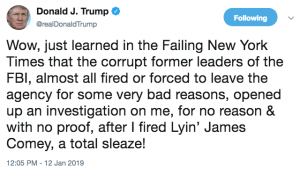

Wow, that Peter Strzok is some devious asshole, showing no concern about Hillary being hacked by a foreign government, huh? Presumably, that’s the headline the taxpayer-funded staffers wanted: BREAKING Peter Strzok doesn’t care about foreign hacking or State trying to protect Hillary.

To the credit of the press outlets that did cover this report, they identified the more relevant conclusion of these documents: After spending a year double-checking the work of the FBI, these Senate staffers found that the FBI was right when it said it had found no evidence Hillary’s server had been hacked.

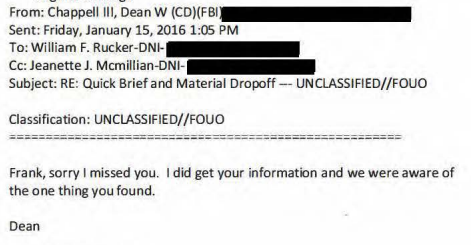

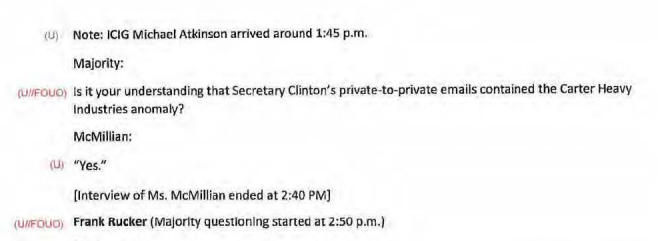

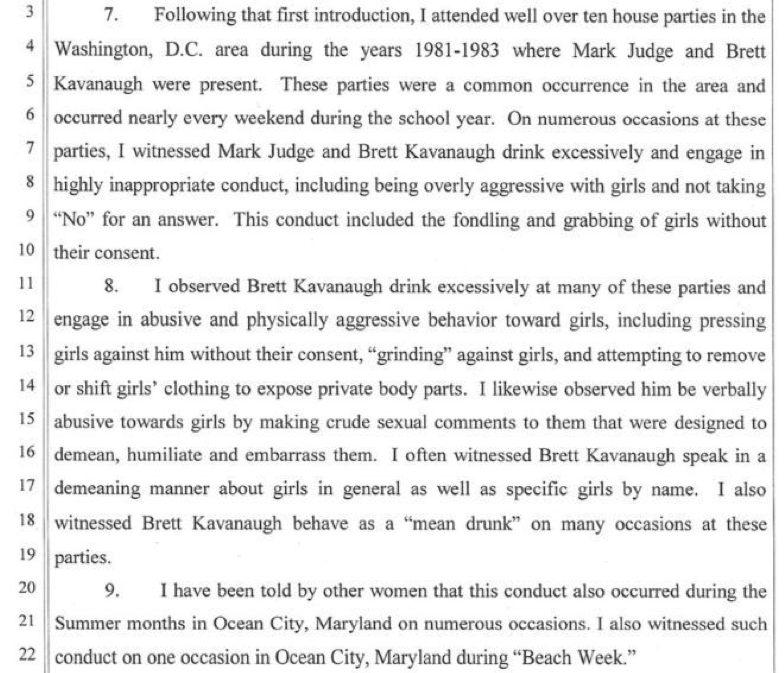

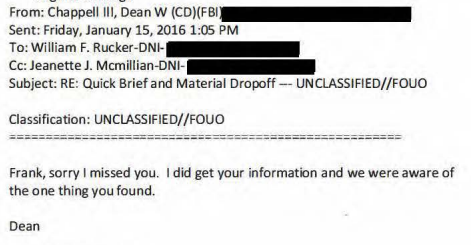

What the backup actually shows is that an ICIG Inspector, Frank Rucker, found an “anomaly” while reviewing Hillary Clinton’s emails: an unknown Gmail address for a company called Carter Heavy Industries in her email headers, which he thought could have been used to steal her emails as they were sent. At a meeting largely designed to explain the ICIG efforts to review Hillary’s email for classified information to Strzok, who had just been promoted to the DAD position at FBI a week earlier, Rucker shared what he found with Strzok and the FBI agent he had already been liaising with, Dean Chappell. The FBI already knew of it, and that same day would confirm the explanation: that tech contractor Paul Combetta had used a dummy email address to copy over Hillary’s emails as he migrated them onto a Platte River server.

When interviewed about all this three years later, after Peter Strzok had become the villain in Donald Trump’s Deep State coup conspiracy, Rucker accused Strzok of being “aloof and dismissive” of his concerns.

Mr. Rucker said that Mr. Chappell was normal and professional as he had come to know him to be, but that he didn’t know anything about Mr. Strzok prior to the meeting. Mr. Rucker said that Mr. Strzok seemed to be “aloof and dismissive.” He said it was as if Mr. Strzok felt dismissive of the relationship between the FBI and ICIG and he was not very warm. He said that Mr. Strzok didn’t ask many questions including any about SAP related issues. He said the meeting lasted approximately 30 to 60 minutes and that only people from the FBI attended; there were no employees from DOJ. Mr. Rucker said that he knows that an FBI attorney was present, but he cannot remember the person’s name or even whether it was a man or a woman.

[snip]

Mr. Rucker said that he discussed SAP with the FBI. He said he discussed another of Secretary Clinton’s emails that they were never able to quite figure out. He said he verbally presented this information to Mr. Strzok which lasted only for a minute or so. He said that he doesn’t think he mentioned Carter Heavy Industries by name, but only the appearance of a Gmail address that seemed odd. He said that Mr. Strzok seemed “nonplused” by the info, and that he didn’t ask any follow-up questions. He said that Mr. Chappell seemed familiar with the discovery and he felt like Mr. Chappell was walling Mr. Rucker off intentionally as an investigator would, to protect the investigation.

That last detail — that Chappell seemed familiar with the discovery — is key. In fact, the emails sent in advance of the February 18, 2016 meeting reveal that several weeks earlier, Rucker had already shared this anomaly with Chappell, and Chappell had told him then that he already knew about it.

Along with making accusations about Strzok, Rucker changed his story about how strongly he believed that he had found something significant. The day before Chappell told him the FBI was already aware of the email, Rucker had emailed him that the anomaly was probably nothing.

Additionally, he wanted me to run something that I found in my research of the email metadata past you or someone on the team. It’s probably nothing, but we would rather be safe than sorry.

But when interviewed last year by Senate staffers seeking more evidence against Strzok, Rucker claimed that until a news report explained the anomaly in 2018, he had 90% confidence he had found evidence that China had hacked Hillary’s home server, and still had 80% confidence after learning the FBI had explained it.

Rucker: Mr. Rucker said that he didn’t find any evidence in the remainder of the email review they conducted, but that based on the subpoena issued by the FBI in June 2016 which he learned about this year through a news article, it decreased his confidence level from 90% to 80%.

Meanwhile, the one other ICIG employee interviewed last year, Jeanette McMillian, described what Rucker claimed was dismissiveness as adopting a poker face.

[T]hey provided the information to Mr. Strzok who found it strange. Even before their meeting with Mr. Strzok, Dean Chappell of the FBI informed them that he was aware of the Carter Heavy Industries email address. She said that she doesn’t know whether Mr. Chappell knew before they dropped off the original packet in January 2016, or if he learned of it afterward. News of this email address being found on Secretary Clinton’s emails wasn’t shocking to them, she said, but they took it seriously.

[snip]

[T]he FBI employees in attendance were “poker faced.”

In other words, what the backup released last week actually shows is a tremendous waste of time spent trying to second-guess what the FBI learned with the backing of subpoenas and other investigative tools. To cover over this waste of time, Grassley and Johnson instead pitch this as a shift in their investigation, this time to examine claims that Strzok wasn’t concerned about State arguing that emails weren’t classified (and probably an attempt to examine the document, believed to be a fake, suggesting Loretta Lynch would take care of the Hillary Clinton investigation).

Staff from the Intelligence Community Inspector General’s office (ICIG) witnessed efforts by senior Obama State Department officials to downplay the volume of classified emails that transited former Secretary Hillary Clinton’s unauthorized server, according to a summary of a bipartisan interview with Senate investigators.

In fact, in the “summary” released, McMillian told Senate investigators that, “If anything, there were problems at State with upgrading of information,” exactly the opposite of what Grassley and Johnson claim in their press release.

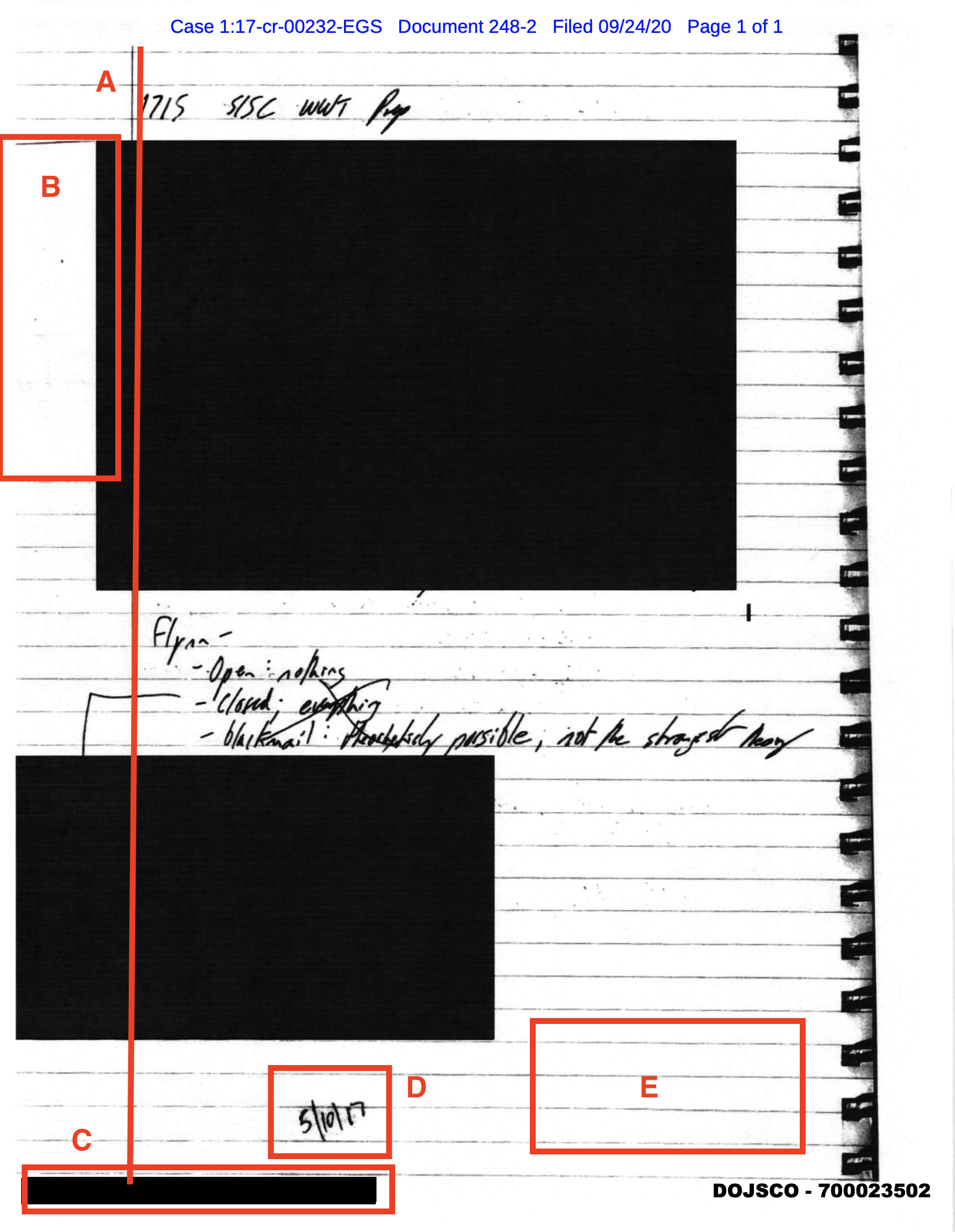

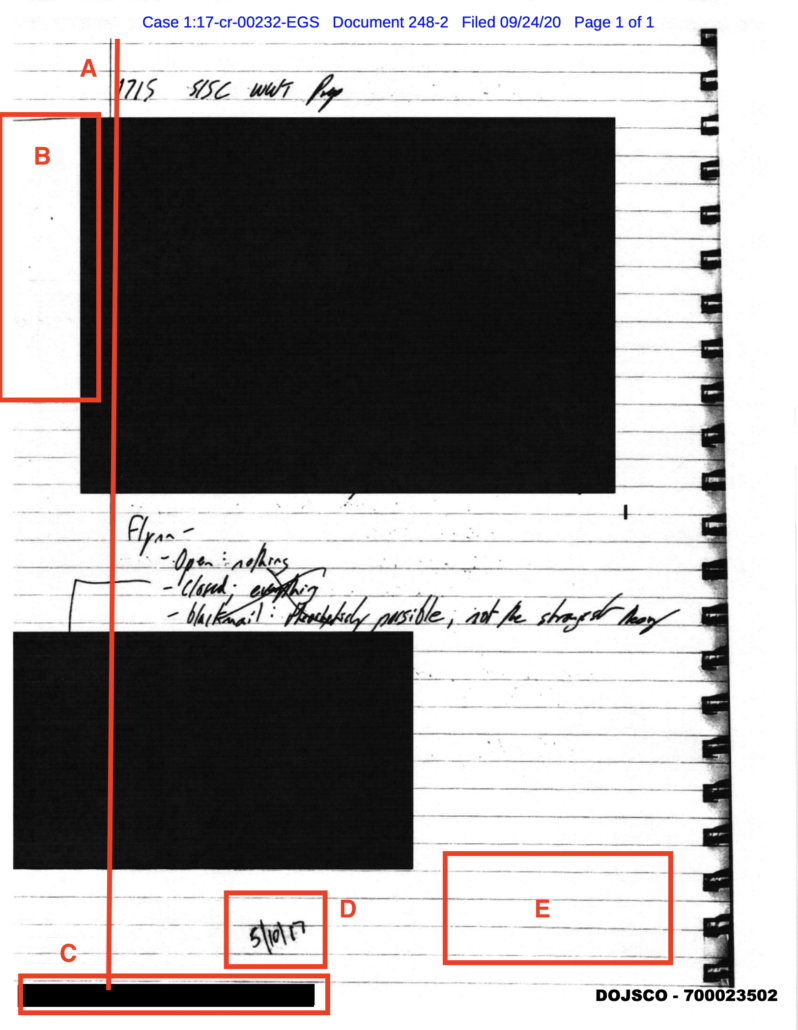

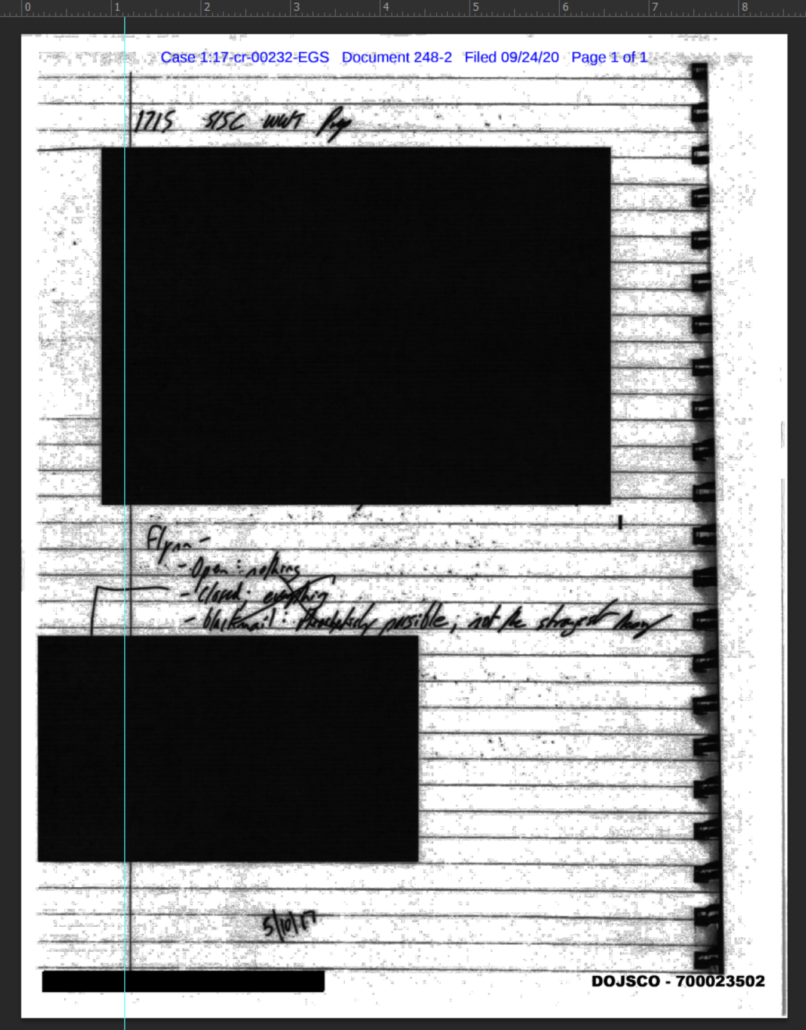

And that word — summary — should raise a lot of questions. It’s not a transcript; in most cases, the report is a paraphrase of what the witnesses said. Moreover, it’s only a “summary” of what Majority staffers asked. Minority staff questions were not included at all, as best demonstrated by this nearly hour-long gap in the “summary.”

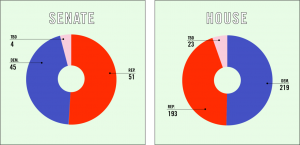

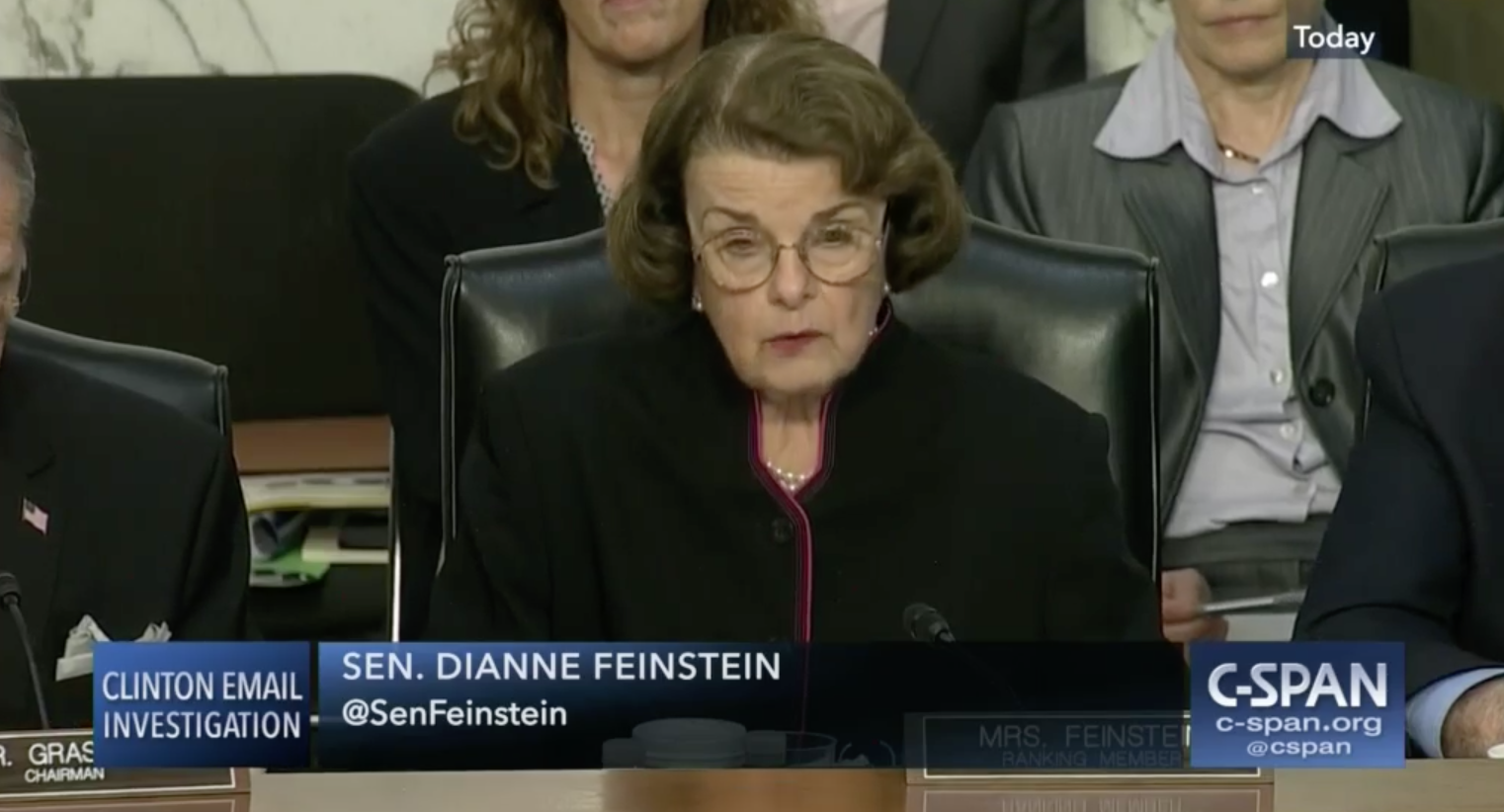

Because of the way Grassley brought this “investigation” with him when he assumed the Chairmanship of the Finance Committee, this release — from Chuck Grassley as Finance Committee Chair and Ron Johnson as Homeland Security Chair — effectively did not involve the Ranking members of the committees that did the work — Dianne Feinstein as Judiciary Ranking member and Claire McCaskill as HSGAC Ranking member.

To put in perspective what a colossal misuse of taxpayer funds this is, consider, first of all, that Grassley has been pursuing this for over four years.

Last fall, Majority staffers actually asked Rucker how ICIG came to be involved in the Hillary investigation.

How did ICIG come to be involved with the Secretary Clinton email investigation?

Rucker: – Mr. Rucker said that on March 12, 2015, the Senate sent a letter to ICIG requesting assistance regarding a Russian hacker who allegedly broken into Sidney Blumenthal’s email account. He said that the Sidney Blumenthal emails looked legitimate and were not at the SSRP level. He said that [redacted], a former CIA employee who worked with Blumenthal, was the author of most of the material. That was determined in part, he said, based on his writing style. Shortly afterwards, he said, ICIG received another Senate request for assistance, this time in relation to the email practices of several former Secretaries of State including Secretary Clinton. He said that through ICIG, he was brought in to assist State in reviewing the email information in June 2015.

But they knew the answer to that. As their own staffers tacitly reminded Grassley and Johnson, Grassley has been pursuing this since 2015.

Your investigation began in March 2015 with an initial focus on whether State Department officials were aware of Secretary Clinton’s private server and the associated national security risks, as well as whether State Department officials attempted to downgrade classified material within emails found on that server. For example, in August 2015, Senator Grassley wrote to the State Department about reports that State Department FOIA specialists believed some of Secretary Clinton’s emails should be subject to the (b)(1), “Classified Information” exemption whereas attorneys within the Office of the Legal Advisor preferred to use the (b)(5), “Deliberative Process” exemption. Whistleblower career employees within the State Department also reportedly notified the Intelligence Community that others at State involved in the review process deliberately changed classification determinations to protect Secretary Clinton.1 Your inquiry later extended to how the Department of Justice (DOJ) and Federal Bureau of Investigation (FBI) managed their investigation of the mishandling of classified information.

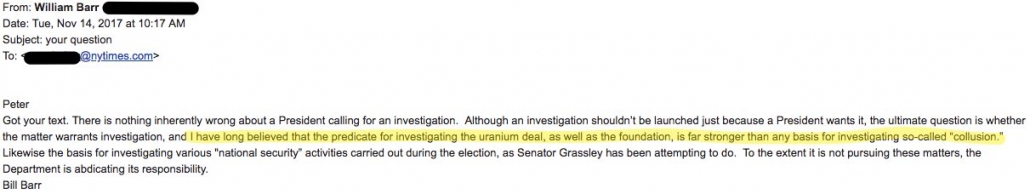

That means that this effort — to misrepresent an interview conducted in December as a way to introduce new (and obviously bogus) allegations against Strzok — is a continuation of Barbara Ledeen’s efforts to prove some foreign government had hacked Hillary’s home server, as laid out in the Mueller Report.

Ledeen began her efforts to obtain the Clinton emails before Flynn’s request, as early as December 2015.268 On December 3, 2015, she emailed Smith a proposal to obtain the emails, stating, “Here is the proposal I briefly mentioned to you. The person I described to you would be happy to talk with you either in person or over the phone. The person can get the emails which 1. Were classified and 2. Were purloined by our enemies. That would demonstrate what needs to be demonstrated.”269

Attached to the email was a 25-page proposal stating that the “Clinton email server was, in all likelihood, breached long ago,” and that the Chinese, Russian, and Iranian intelligence services could “re-assemble the server’s email content.”270 The proposal called for a three-phase approach. The first two phases consisted of open-source analysis. The third phase consisted of checking with certain intelligence sources “that have access through liaison work with various foreign services” to determine if any of those services had gotten to the server. The proposal noted, “Even if a single email was recovered and the providence [sic] of that email was a foreign service, it would be catastrophic to the Clinton campaign[.]” Smith forwarded the email to two colleagues and wrote, “we can discuss to whom it should be referred.”271 On December 16, 2015, Smith informed Ledeen that he declined to participate in her “initiative.” According to one of Smith’s business associates, Smith believed Ledeen’s initiative was not viable at that time.272

[snip]

In September 2016, Smith and Ledeen got back in touch with each other about their respective efforts. Ledeen wrote to Smith, “wondering if you had some more detailed reports or memos or other data you could share because we have come a long way in our efforts since we last visited … . We would need as much technical discussion as possible so we could marry it against the new data we have found and then could share it back to you ‘your eyes only.'”282

Ledeen claimed to have obtained a trove of emails (from what she described as the “dark web”) that purported to be the deleted Clinton emails. Ledeen wanted to authenticate the emails and solicited contributions to fund that effort. Erik Prince provided funding to hire a tech advisor to ascertain the authenticity of the emails. According to Prince, the tech advisor determined that the emails were not authentic.283

Remember, Ledeen was willing to reach out to hostile foreign intelligence services to find out if they had hacked Hillary, and she joined an effort that was trawling the Dark Web to find stolen emails. She did that not while employed in an oppo research firm like Fusion GPS, funded indirectly by a political campaign, but while being paid by US taxpayers.

Chuck Grassley is now Chair of the Finance Committee, the Committee that should pursue new transparency rules to make it easier to track foreign interference via campaign donations. Ron Johnson is and has been Chair of the Homeland Security Committee, from which legislation to protect elections from foreign hackers should arise.

Rather than responding to the real hacks launched by adversaries against our democracy, they’re still trying to find evidence of a hack where there appears to have been none, four years later.

Update: For some reason I counted 2015-2019 as five years originally. That has been fixed.